RoMa v2 → ONNX + Triton

I've put together a companion repo that exports RoMa v2 to ONNX and serves it via NVIDIA Triton Inference Server:

https://github.com/ilanmotiei/romav2-onnx

All source changes have now been pushed to a public fork at https://github.com/ilanmotiei/RoMaV2 (commit 8de863a).

Happy to incorporate any feedback or open a PR if this would be useful upstream.

What's included

scripts/export_onnx.py — exports any setting (turbo / fast / base) to ONNX using the classic TorchScript JIT tracer. All AMP / bfloat16 paths are disabled so the full graph stays in float32 (required for ORT compatibility). RoPE embeddings are forced to fp32 as well.scripts/export_onnx.py --validate — runs a CPU-vs-CPU comparison between the PyTorch model and the exported ONNX model and asserts they match within atol=0.02.scripts/visualize.py — produces a 6-panel composite showing the warp, confidence map, alpha blend, and dense correspondences.scripts/triton_client.py — lightweight HTTP client for querying a running Triton server.triton/model_repository/romav2/config.pbtxt — ready-to-use Triton model config.

Install

git clone https://github.com/ilanmotiei/romav2-onnx

cd romav2-onnx

pip install ".[all]" # pulls romav2 from this repo as a git dep

Quick start

# Export

python scripts/export_onnx.py --output romav2_fast.onnx --setting fast

# Validate

python scripts/export_onnx.py --validate romav2_fast.onnx --setting fast

# Visualise

python scripts/visualize.py --onnx romav2_fast.onnx --out result.png

# Triton

docker run --rm -d -p 8000:8000 -v $(pwd)/triton/model_repository:/models \

nvcr.io/nvidia/tritonserver:23.12-py3 tritonserver --model-repository=/models

python scripts/triton_client.py assets/toronto_A.jpg assets/toronto_B.jpg \

--url localhost:8000 --out result.png

Example results

Input images (University of Toronto, large viewpoint change):

| Image A |

Image B |

|

|

Matching output (PyTorch / ONNX / Triton — all identical):

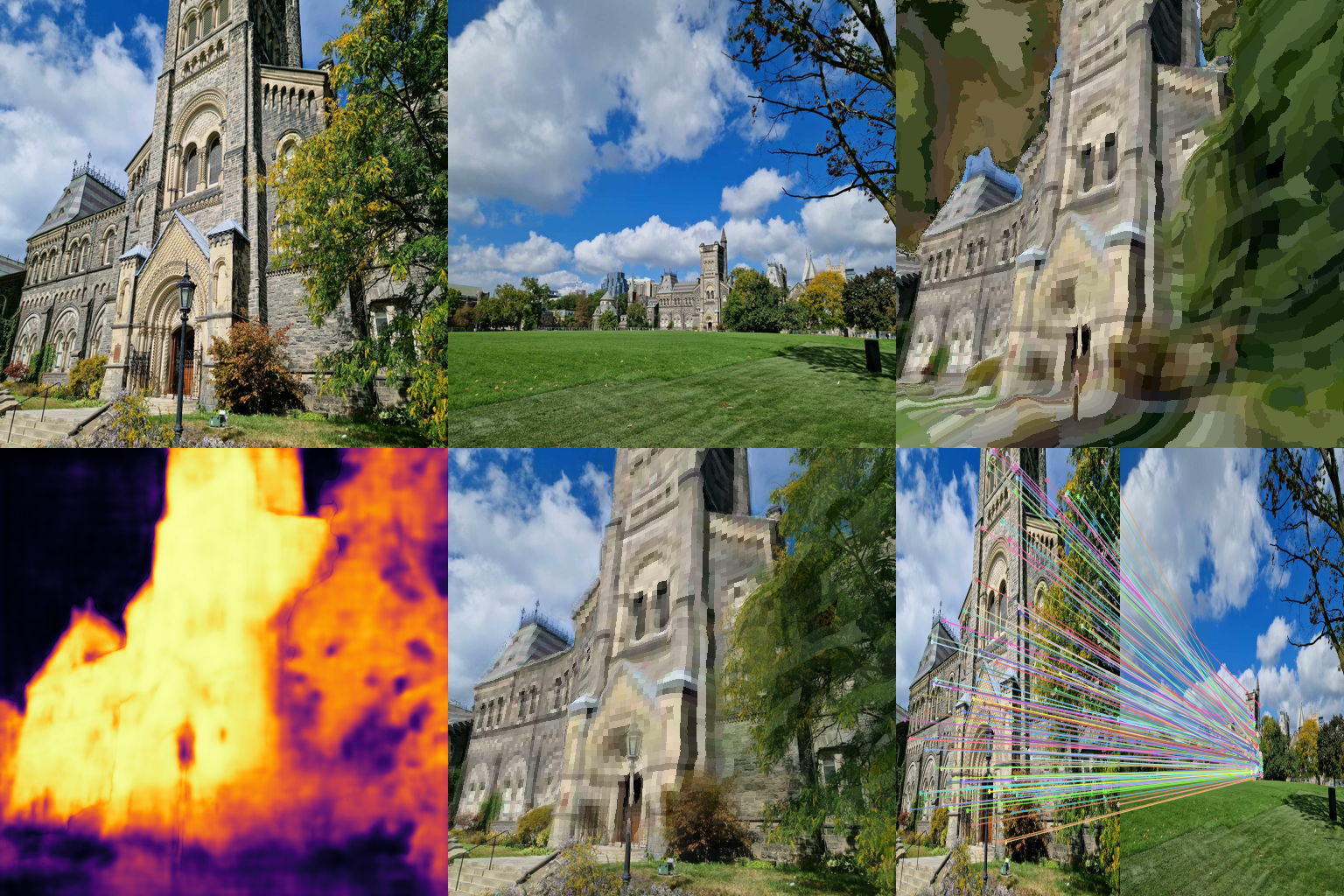

Top-left: Image A · Top-center: Image B · Top-right: Image B warped into A's frame

Bottom-left: Overlap confidence (bright = high) · Bottom-center: Alpha blend · Bottom-right: Dense correspondences

RoMa v2 → ONNX + Triton

I've put together a companion repo that exports RoMa v2 to ONNX and serves it via NVIDIA Triton Inference Server:

https://github.com/ilanmotiei/romav2-onnx

All source changes have now been pushed to a public fork at https://github.com/ilanmotiei/RoMaV2 (commit 8de863a).

Happy to incorporate any feedback or open a PR if this would be useful upstream.

What's included

scripts/export_onnx.py— exports any setting (turbo/fast/base) to ONNX using the classic TorchScript JIT tracer. All AMP / bfloat16 paths are disabled so the full graph stays in float32 (required for ORT compatibility). RoPE embeddings are forced to fp32 as well.scripts/export_onnx.py --validate— runs a CPU-vs-CPU comparison between the PyTorch model and the exported ONNX model and asserts they match withinatol=0.02.scripts/visualize.py— produces a 6-panel composite showing the warp, confidence map, alpha blend, and dense correspondences.scripts/triton_client.py— lightweight HTTP client for querying a running Triton server.triton/model_repository/romav2/config.pbtxt— ready-to-use Triton model config.Install

Quick start

Example results

Input images (University of Toronto, large viewpoint change):

Matching output (PyTorch / ONNX / Triton — all identical):