
| 1 | +# Hands-on Guide: Setting Up Qwen 2.1 on AWS EC2 with Ollama |

| 2 | + |

| 3 | +This guide walks you through setting up and running Qwen 2.1 (pulled from the Ollama library under the `qwen2` tags), a small but efficient LLM, on a free-tier AWS EC2 instance using Ollama. |

| 4 | + |

| 5 | +## 1. Creating an EC2 Instance |

| 6 | + |

| 7 | +1. **Log in to AWS Console** |

| 8 | + - Go to https://aws.amazon.com/console/ |

| 9 | + - Create a new free tier account, or sign into your existing account. |

| 10 | + |

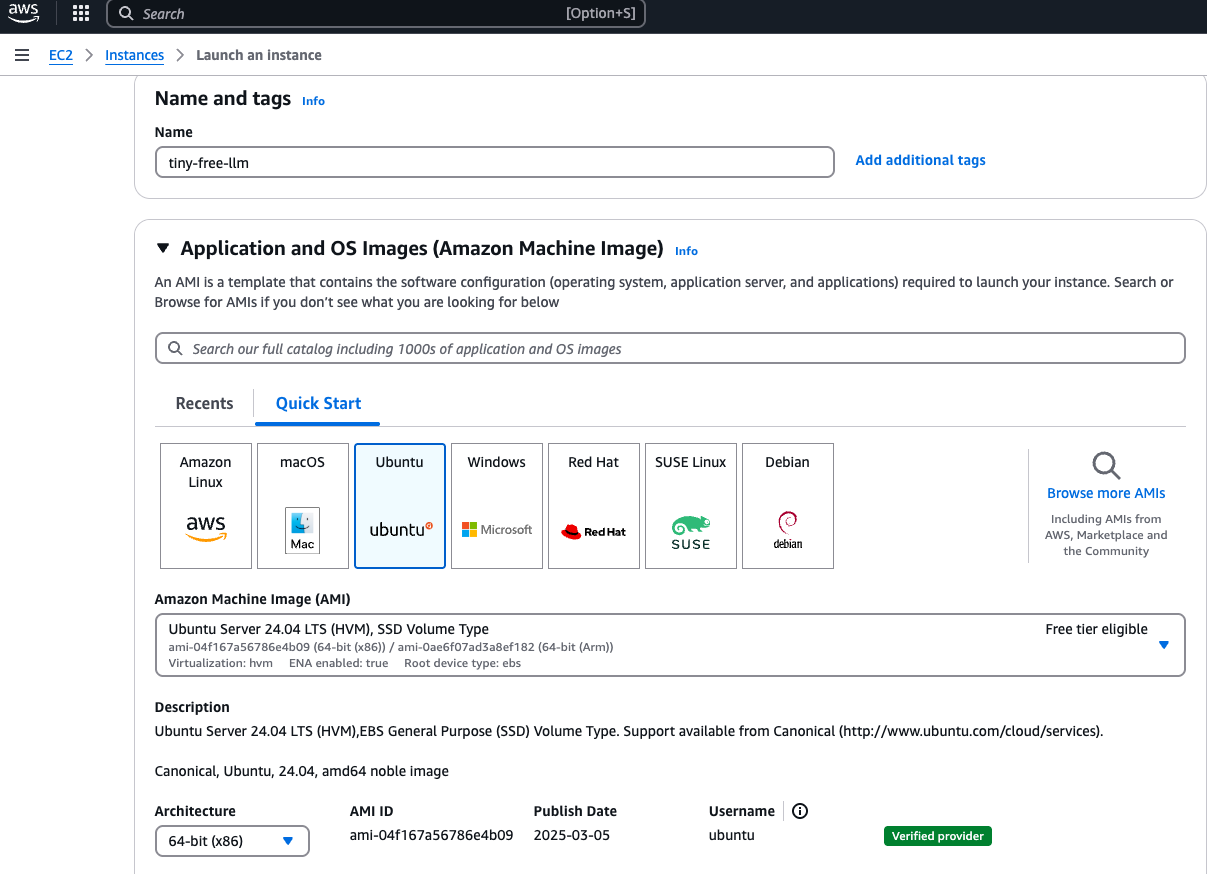

| 11 | +2. **Launch an EC2 Instance** |

| 12 | + - Navigate to EC2 dashboard |

| 13 | + - Click "Launch Instance" |

| 14 | + - **Name**: `ollama-qwen-instance` (or your preferred name) |

| 15 | + - **AMI**: Ubuntu Server 22.04 LTS (free tier eligible) |

| 16 | + - **Architecture**: Choose x86 (64-bit) architecture, not ARM |

| 17 | + |

| 18 | +  |

| 19 | + |

| 20 | + |

| 21 | + 3. - **Instance Type**: t2.micro (minimum recommended; 1 vCPU, 1 GB RAM, which realistically fits only the 0.5B model) |

| 22 | + - For better performance: t2.xlarge or t3.xlarge if budget allows |

| 23 | + - **Key Pair**: Create new or select existing SSH key pair |

| 24 | + |

| 25 | +  |

| 26 | + |

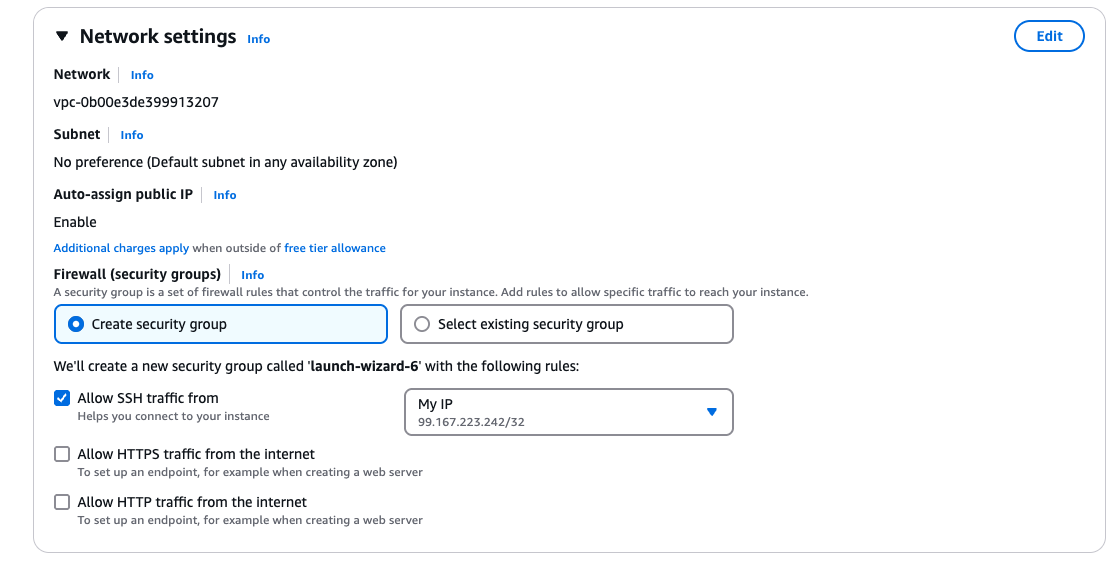

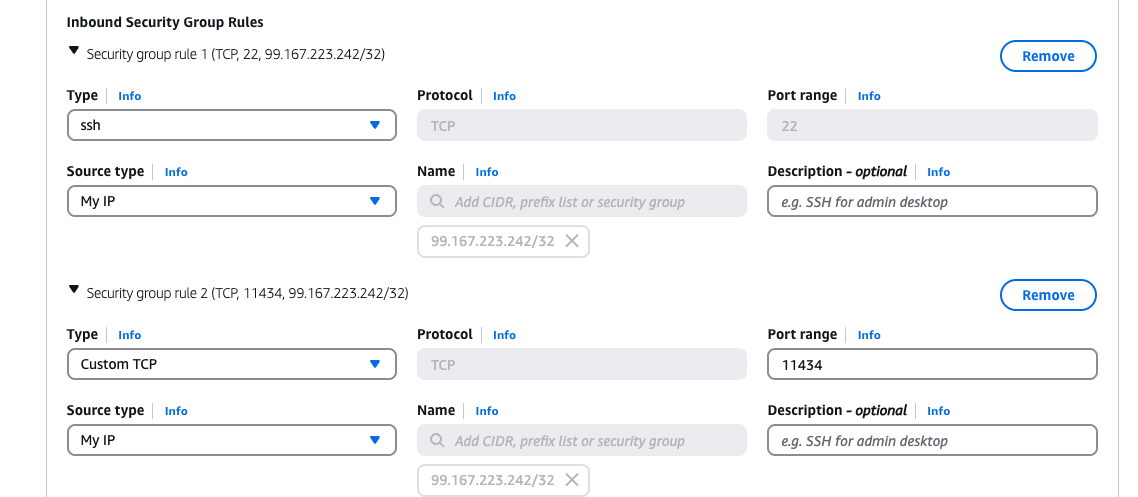

| 27 | + 4. - **Network Settings**: Use the default VPC and allow SSH traffic from your IP address only. Select "Create security group", then click "Edit" to adjust its rules. |

| 28 | + - **Security Group**: Create a new group with the following rules: |

| 29 | + - SSH (port 22) from your IP address |

| 30 | + Click "Add security group rule": |

| 31 | + - Custom TCP (port 11434) from your IP address (for the Ollama API) |

| 32 | + |

| 33 | + |

| 34 | + |

| 35 | + 5. - **Configure Storage**: 30 GB gp3 (the free tier maximum; allocate more if you plan to pull larger models) |

| 36 | + - Click "Launch Instance" |
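The two security group rules above can also be expressed with the AWS CLI. The sketch below only prints the commands so you can review them before running anything. The group ID and IP address are hypothetical placeholders, and the helper name `ingress_cmd` is made up for this example.

```bash
# Hypothetical placeholders: substitute your own security group ID and public IP.
SG_ID="${SG_ID:-sg-0123456789abcdef0}"
MY_IP="${MY_IP:-203.0.113.10}"

# Print (do not run) the AWS CLI call that opens one TCP port to a single IP.
ingress_cmd() {
  printf 'aws ec2 authorize-security-group-ingress --group-id %s --protocol tcp --port %s --cidr %s/32\n' \
    "$SG_ID" "$1" "$MY_IP"
}

# One rule for SSH (22) and one for the Ollama API (11434).
for port in 22 11434; do
  ingress_cmd "$port"
done
```

Restricting both rules to a `/32` CIDR mirrors the "from your IP address" choice in the console steps.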

| 37 | + |

| 38 | +## 2. Connecting to Your Instance |

| 39 | + |

| 40 | +1. **Find your instance public IP** |

| 41 | + - On EC2 dashboard, select your instance |

| 42 | + - Copy the Public IPv4 address |

| 43 | + |

| 44 | +2. **Connect via SSH** |

| 45 | + - On macOS/Linux terminal: |

| 46 | + ```bash |

| 47 | + chmod 400 your-key-pair.pem |

| 48 | + ssh -i your-key-pair.pem ubuntu@your-public-ip |

| 49 | + ``` |

| 50 | + - On Windows, use PuTTY or Windows Terminal |

| 51 | + |

| 52 | +## 3. Installing Ollama |

| 53 | + |

| 54 | +1. **Update system packages** |

| 55 | + ```bash |

| 56 | + sudo apt update && sudo apt upgrade -y |

| 57 | + ``` |

| 58 | + |

| 59 | +2. **Install required dependencies** |

| 60 | + ```bash |

| 61 | + sudo apt install -y curl wget |

| 62 | + ``` |

| 63 | + |

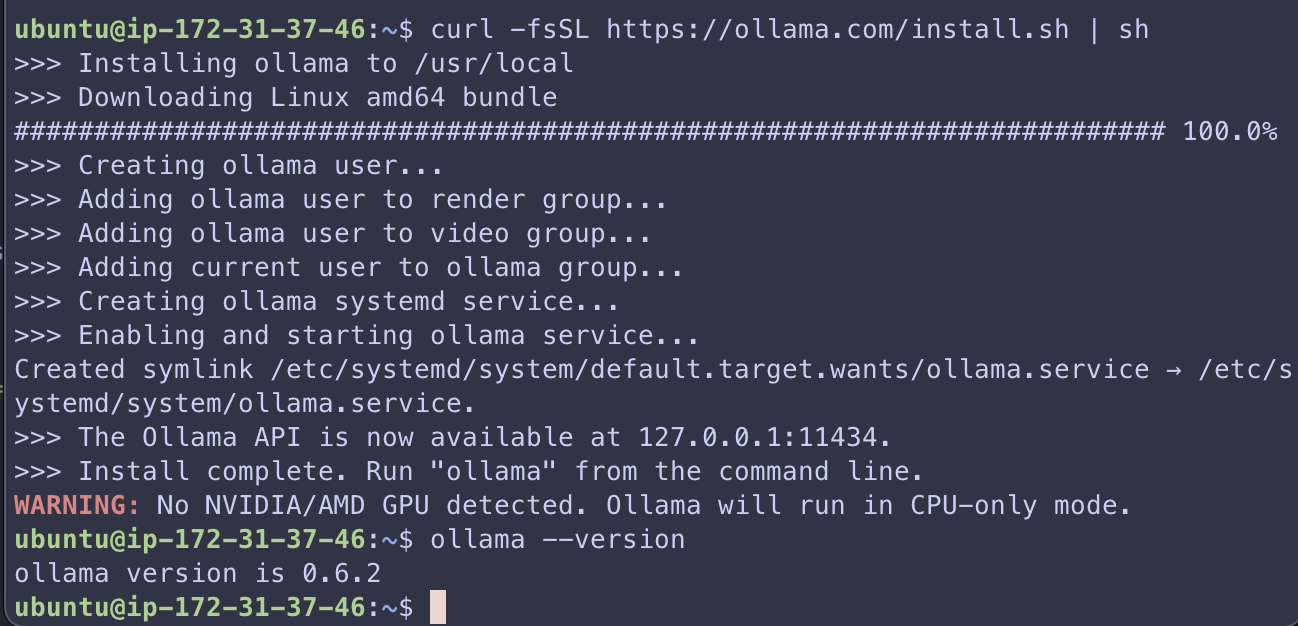

| 64 | +3. **Install Ollama** |

| 65 | + ```bash |

| 66 | + curl -fsSL https://ollama.com/install.sh | sh |

| 67 | + ``` |

| 68 | + |

| 69 | +4. **Verify installation** |

| 70 | + ```bash |

| 71 | + ollama --version |

| 72 | + ``` |

| 73 | + |

| 74 | + |

| 75 | + |

| 76 | + |

| 77 | +## 4. Setting Up and Running Qwen 2.1 |

| 78 | + |

| 79 | +1. **Pull the Qwen 2.1 model** |

| 80 | + ```bash |

| 81 | + # For the free tier (t2.micro): small and fast, but less capable |

| 82 | + ollama pull qwen2:0.5b |

| 83 | + |

| 84 | + # Optional: 7B parameter model (only on a larger instance such as t2.xlarge) |

| 85 | + ollama pull qwen2:7b |

| 86 | + ``` |

| 87 | + This will download and set up the model (may take several minutes) |

| 88 | + |

| 89 | +2. **Test the model with a simple prompt** |

| 90 | + ```bash |

| 91 | + ollama run qwen2:0.5b "Explain the concept of product engineering for AI in 3 sentences" |

| 92 | + ``` |
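Which tag to pull depends mostly on how much RAM the instance has. As a rough sketch, the script below reads total memory from `/proc/meminfo` and suggests one of the two tags used in this guide. The 6000 MiB cutoff is an assumption for illustration, not a documented requirement, and the function names are hypothetical.

```bash
# Total RAM in MiB, read from /proc/meminfo (Linux only).
total_mem_mib() {
  awk '/^MemTotal:/ { print int($2 / 1024) }' /proc/meminfo 2>/dev/null
}

# Suggest a model tag for a given amount of RAM in MiB.
# The 6000 MiB threshold is an assumed rule of thumb.
pick_model_tag() {
  if [ "${1:-0}" -ge 6000 ]; then
    echo "qwen2:7b"
  else
    echo "qwen2:0.5b"
  fi
}

MODEL="$(pick_model_tag "$(total_mem_mib)")"
echo "Suggested model: $MODEL"
```

On a t2.micro this suggests `qwen2:0.5b`; you could then run `ollama pull "$MODEL"`.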

| 93 | + |

| 94 | +## 5. Running Ollama as a Service |

| 95 | + |

| 96 | +1. **Start Ollama service** |

| 97 | + ```bash |

| 98 | + sudo systemctl start ollama |

| 99 | + ``` |

| 100 | + |

| 101 | +2. **Enable Ollama to start on boot** |

| 102 | + ```bash |

| 103 | + sudo systemctl enable ollama |

| 104 | + ``` |

| 105 | + |

| 106 | +3. **Check service status** |

| 107 | + ```bash |

| 108 | + sudo systemctl status ollama |

| 109 | + ``` |
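Section 1 opens port 11434 to your IP, but Ollama listens only on `127.0.0.1` by default, so remote calls will not reach it until the service is told to bind to another address. One way to do that is a systemd drop-in that sets `OLLAMA_HOST`. The sketch below previews the drop-in and applies it only when `APPLY=yes` is set. The `APPLY` switch and the `render_override` helper are assumptions made for this example.

```bash
# Print a systemd drop-in that sets the address Ollama listens on.
render_override() {
  printf '[Service]\nEnvironment="OLLAMA_HOST=%s"\n' "${1:-0.0.0.0:11434}"
}

# Preview the drop-in; nothing is written at this point.
render_override

# Apply only when explicitly requested, since this needs root.
if [ "${APPLY:-no}" = "yes" ]; then
  sudo mkdir -p /etc/systemd/system/ollama.service.d
  render_override | sudo tee /etc/systemd/system/ollama.service.d/override.conf >/dev/null
  sudo systemctl daemon-reload
  sudo systemctl restart ollama
fi
```

Binding to `0.0.0.0` exposes the API on every interface, so keep the security group rule limited to your own IP address.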

| 110 | + |

| 111 | +## 6. Using the Ollama API |

| 112 | + |

| 113 | +Ollama also exposes a REST API on port 11434, which is useful for scripting and integration: |

| 114 | + |

| 115 | +1. **Generate a response** |

| 116 | + ```bash |

| 117 | + curl -X POST http://localhost:11434/api/generate -d '{ |

| 118 | + "model": "qwen2:0.5b", |

| 119 | + "prompt": "What are three key considerations when building AI products?", |

| 120 | + "stream": false |

| 121 | + }' |

| 122 | + ``` |

| 123 | + |

| 124 | +2. **List available models** |

| 125 | + ```bash |

| 126 | + curl http://localhost:11434/api/tags |

| 127 | + ``` |
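When the prompt comes from a variable, quoting it by hand inside the JSON body gets error-prone. The sketch below wraps the `/api/generate` call from step 1 in small helper functions. The helper names and the `OLLAMA_URL` variable are assumptions for this example, and the escaping covers only backslashes and double quotes, not newlines or other control characters.

```bash
OLLAMA_URL="${OLLAMA_URL:-http://localhost:11434}"

# Escape backslashes and double quotes so text is safe inside a JSON string.
json_escape() {
  printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g'
}

# Build the request body for /api/generate: $1 = model tag, $2 = prompt.
build_payload() {
  printf '{"model": "%s", "prompt": "%s", "stream": false}\n' "$1" "$(json_escape "$2")"
}

# Send one prompt to the API and print the raw JSON reply.
ask() {
  curl -s -X POST "$OLLAMA_URL/api/generate" -d "$(build_payload "$1" "$2")"
}
```

For example, `ask qwen2:0.5b 'Define "latency" in one sentence'` sends a prompt that contains quotes without breaking the JSON.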

| 128 | + |

| 129 | +## 7. Performance Optimization |

| 130 | + |

| 131 | +1. **Monitor memory usage** |

| 132 | + ```bash |

| 133 | + watch -n 1 free -h |

| 134 | + ``` |

| 135 | + |

| 136 | +2. **Create swap space if needed** |

| 137 | + ```bash |

| 138 | + sudo fallocate -l 8G /swapfile |

| 139 | + sudo chmod 600 /swapfile |

| 140 | + sudo mkswap /swapfile |

| 141 | + sudo swapon /swapfile |

| 142 | + echo '/swapfile none swap sw 0 0' | sudo tee -a /etc/fstab |

| 143 | + ``` |
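Running the swap commands a second time appends a duplicate line to `/etc/fstab`. A guarded append avoids that. The following is a minimal sketch: the function name `add_swap_entry` and the `SUDO` variable are assumptions for this example, and the demo runs against a scratch file rather than the real `/etc/fstab`.

```bash
SWAP_LINE='/swapfile none swap sw 0 0'

# Append the swap entry to an fstab-style file unless it is already present.
# Set SUDO=sudo when the target is the real /etc/fstab.
add_swap_entry() {
  if grep -qxF "$SWAP_LINE" "$1" 2>/dev/null; then
    echo "swap entry already present in $1"
  else
    printf '%s\n' "$SWAP_LINE" | ${SUDO:-} tee -a "$1" >/dev/null
    echo "swap entry added to $1"
  fi
}

# Demo on a scratch file: the second call finds the line and adds nothing.
scratch="$(mktemp)"
add_swap_entry "$scratch"
add_swap_entry "$scratch"
rm -f "$scratch"
```

On the instance you would call `SUDO=sudo add_swap_entry /etc/fstab`, then confirm the swap is active with `swapon --show`.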

| 144 | + |

| 145 | +## 8. Running Ollama in the Background |

| 146 | + |

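| 147 | +If you are not using the systemd service from section 5 and want to keep Ollama running after disconnecting, start the server manually in the background. Do not run both at once: a second server fails to start because port 11434 is already in use. |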

| 148 | + |

| 149 | +```bash |

| 150 | +nohup ollama serve > ollama.log 2>&1 & |

| 151 | +``` |

| 152 | + |

| 153 | +## 9. Basic Prompt Examples |

| 154 | + |

| 155 | +Try these prompts to test your Qwen 2.1 model: |
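As a sketch of how to run several prompts in one go, the script below reads prompts line by line and passes each to `ollama run`. The two prompts are illustrative placeholders rather than a recommended set, and the `MODEL` default and the `run_prompts` name are assumptions for this example.

```bash
MODEL="${MODEL:-qwen2:0.5b}"

# Read prompts from stdin, one per line, and send each to the model.
run_prompts() {
  while IFS= read -r prompt; do
    [ -n "$prompt" ] || continue   # skip blank lines
    printf '>>> %s\n' "$prompt"
    # </dev/null keeps `ollama run` from consuming the remaining prompts on stdin.
    ollama run "$MODEL" "$prompt" </dev/null
  done
}

# Only call the model when the ollama CLI is actually installed.
if command -v ollama >/dev/null 2>&1; then
  run_prompts <<'EOF'
Summarize what a large language model is in two sentences.
List three uses for a small self-hosted LLM.
EOF
fi
```

You can also keep your own prompts in a file and run `run_prompts < prompts.txt`.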

| 156 | + |

| 157 | + |

| 158 | + |

| 159 | +## 10. Cleaning Up |

| 160 | + |

| 161 | +When you're done, remember to stop or terminate your EC2 instance to avoid unnecessary charges: |

| 162 | + |

| 163 | +1. In the EC2 dashboard, select your instance |

| 164 | +2. Choose "Instance state" and select "Stop" or "Terminate" |

| 165 | + |

| 166 | +**Note**: Stopping the instance will preserve your data but still incur storage costs. Terminating the instance will delete all data. |
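The same cleanup can be done from the AWS CLI. The sketch below prints the matching command instead of running it, so nothing is stopped or deleted by accident. The instance ID is a hypothetical placeholder and `cleanup_cmd` is a helper name made up for this example.

```bash
# Hypothetical placeholder: substitute your own instance ID.
INSTANCE_ID="${INSTANCE_ID:-i-0123456789abcdef0}"

# Print (do not run) the AWS CLI command for the chosen action.
cleanup_cmd() {
  case "$1" in
    stop)      echo "aws ec2 stop-instances --instance-ids $INSTANCE_ID" ;;
    terminate) echo "aws ec2 terminate-instances --instance-ids $INSTANCE_ID" ;;
    *)         echo "usage: cleanup_cmd stop|terminate" >&2; return 1 ;;
  esac
}

cleanup_cmd stop
```

Copy the printed command into your terminal once you have checked the instance ID. As the note above says, `terminate` deletes the instance's data.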
