This case study demonstrates MassGen's ability to achieve unanimous consensus on a complex technical pricing query, showcasing how three different AI agents can converge on a comprehensive, well-researched answer through collaborative analysis. This case study was run on version v0.0.3.

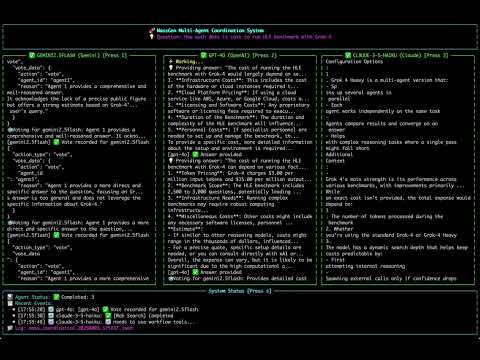

massgen --config @examples/basic/multi/gemini_4o_claude "How much does it cost to run HLE benchmark with Grok-4"Prompt: How much does it cost to run HLE benchmark with Grok-4

- Agent 1: gemini2.5flash (Designated Winner)

- Agent 2: gpt-4o

- Agent 3: claude-3-5-haiku

Watch the recorded demo:

Duration: 162.0s | 826 chunks | 18 events

Each agent approached the complex pricing question from different analytical perspectives:

-

Agent 1 (gemini2.5flash) conducted comprehensive web research and immediately focused on token-based pricing specifics, gathering detailed information about Grok-4's API costs ($3.00 per million input tokens, $15.00 per million output tokens) and contextualizing these with real-world benchmarking cost examples.

-

Agent 2 (gpt-4o) initially provided a more general infrastructure-focused answer covering cloud costs, hardware requirements, and setup considerations. However, it later refined its response to include specific token pricing and benchmark scope details, demonstrating adaptive learning.

-

Agent 3 (claude-3-5-haiku) performed extensive iterative research with multiple web searches, continuously refining its understanding of both the HLE benchmark structure (2,500-3,000 PhD-level questions) and Grok-4's various configuration options (standard vs. Heavy multi-agent mode).

A key strength demonstrated in this session was the agents' commitment to thorough research:

- Agent 3 conducted three separate web searches, each time deepening its understanding and providing more precise details about benchmark costs and model configurations

- Agent 1 integrated comparative cost data from similar reasoning model benchmarks, citing specific examples like Artificial Analysis's $2,767 cost for evaluating OpenAI's o1 model

- All agents recognized the importance of token consumption patterns for reasoning models, which generate significantly more tokens than standard models

The voting process revealed unanimous recognition of Agent 1's superior comprehensive analysis:

- Agent 1 voted for itself, citing its comprehensive and well-reasoned approach with strong cost estimates

- Agent 2 voted for Agent 1, recognizing its "detailed cost analysis and comparison to other reasoning benchmarks, including specific token cost breakdown"

- Agent 3 voted for Agent 1, praising its "more comprehensive and detailed explanation" with "comparative insights from similar benchmarking efforts"

This resulted in a unanimous 3-0 consensus, demonstrating clear quality differentiation.

Agent 1 presented the final response, featuring:

- Precise pricing structure: $3.00 per million input tokens, $15.00 per million output tokens, with blended rate of $6.00 per million tokens

- Comprehensive benchmark context: 2,500-3,000 expert-crafted questions across multiple disciplines, with 10-14% requiring multi-modal comprehension

- Real-world cost comparisons: Cited specific examples including Artificial Analysis's $2,767 cost for o1 evaluation and $5,200 for testing a dozen reasoning models

- Performance considerations: Detailed breakdown of Grok-4's accuracy rates (26.9% without tools, 41.0% with tools, 50.7% in Heavy configuration)

- Practical cost estimate: "Hundreds to thousands of dollars" range based on token consumption patterns

This case study exemplifies MassGen's effectiveness in handling complex technical queries requiring both research depth and practical analysis. The unanimous consensus emerged not from simple agreement, but from all agents recognizing the superior quality of Agent 1's comprehensive, well-sourced, and contextually rich response. The system successfully synthesized technical pricing information, benchmark specifications, and real-world cost comparisons into a definitive answer that addresses both the specific query and provides valuable context for decision-making. This demonstrates MassGen's strength in achieving research-driven consensus on complex technical topics where accuracy and comprehensiveness are paramount.