Description

Describe the bug

We have a setup with 3 Kafka nodes. We have a system A producing records to Kafka, which are consumed by a system B. System B does some processing and produces response records to be consumed by system A.

We are experiencing unexpectedly high end-to-end latencies when performing a rolling upgrade with Strimzi. We are using the default configurations.

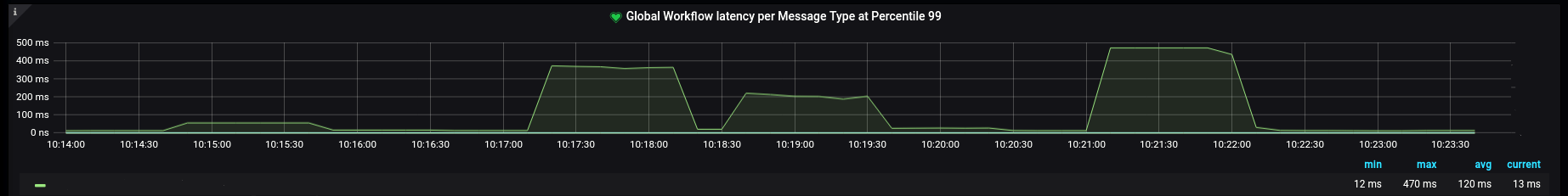

End-to-end latency (measured at system A):

We have also tried restarting the Kafka pods manually (by sending a SIGTERM to the Kafka process in each pod and waiting for the latencies to stabilize), and we do not see the same behaviour.

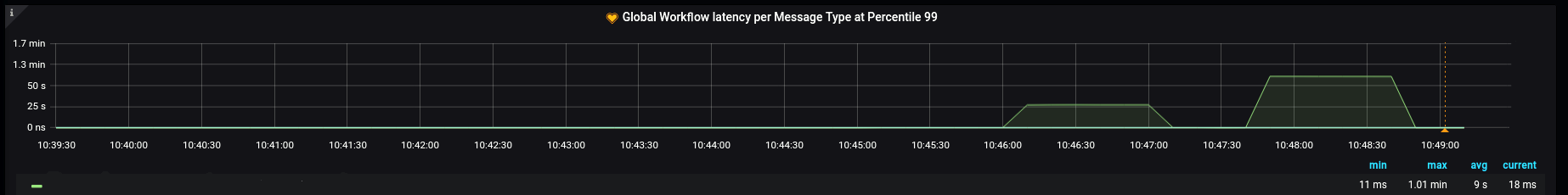

End-to-end latency (measured at system A):

While it might be expected that during a rolling upgrade we see a spike in latencies, we were not expecting to see such a big difference between the manual restarts and the rolling upgrade.
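To put a number on the difference between the two runs, the latency samples from each run can be reduced to a few percentiles. The following is only a minimal sketch: the function name `latency_summary` and the assumption that system A records a send timestamp and a receive timestamp (in milliseconds) per request are hypothetical and not taken from our actual tooling.

```python
from statistics import quantiles


def latency_summary(send_ms, recv_ms):
    """Summarize end-to-end latency from paired send/receive timestamps.

    Hypothetical helper: assumes system A logs, for each request, the time
    it produced the record and the time it consumed the matching response.
    """
    if len(send_ms) != len(recv_ms):
        raise ValueError("send and receive lists must have the same length")
    latencies = sorted(r - s for s, r in zip(send_ms, recv_ms))
    if len(latencies) < 2:
        raise ValueError("need at least two samples")
    # quantiles(..., n=100) returns the 99 cut points between percentiles.
    cuts = quantiles(latencies, n=100, method="inclusive")
    return {"p50": cuts[49], "p99": cuts[98], "max": latencies[-1]}


if __name__ == "__main__":
    # Made-up sample values, only to show the call shape.
    sends = [0, 100, 200, 300, 400]
    recvs = [10, 120, 230, 340, 450]
    print(latency_summary(sends, recvs))
```

Running the same summary over the rolling-upgrade window and the manual-restart window gives directly comparable p50, p99 and maximum values.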

To Reproduce

We reproduce this by triggering a rolling upgrade (with no changes).

Expected behavior

We expect latencies at least similar to those we see when we perform the restarts manually, although we do not know whether this is expected behaviour for Kafka.

Environment (please complete the following information):

- Strimzi version: 0.31.1

- Installation method: Helm chart (via Flux)

- Kubernetes cluster: 1.23+

- Infrastructure: Amazon EKS

YAML files and logs

Kafka cluster:

    apiVersion: kafka.strimzi.io/v1beta2
    kind: Kafka
    metadata:
      name: kafka
    spec:
      kafka:
        version: 3.2.3
        replicas: 3
        config:
          offsets.topic.replication.factor: 3
          transaction.state.log.replication.factor: 3
          transaction.state.log.min.isr: 2
          default.replication.factor: 3
          min.insync.replicas: 2
          inter.broker.protocol.version: "3.2"
        template:
          pod:
            securityContext:
              runAsNonRoot: true
              runAsUser: 1001
              runAsGroup: 1001
              fsGroup: 1001
              fsGroupChangePolicy: "OnRootMismatch"
              seccompProfile:
                type: RuntimeDefault
          kafkaContainer:
            securityContext:
              runAsNonRoot: true
              runAsUser: 1001
              runAsGroup: 1001
              allowPrivilegeEscalation: false
              readOnlyRootFilesystem: true
              capabilities:
                drop:
                  - all
        resources:
          requests:
            cpu: 5000m
            memory: 14336Mi
          limits:
            cpu: 5000m
            memory: 14336Mi
        storage:
          type: jbod
          volumes:
            - id: 0
              type: persistent-claim
              size: 20Gi
              deleteClaim: false
        listeners:
          - name: plain
            port: 9092
            type: internal
            tls: false
          - name: tls
            port: 9093
            type: internal
            tls: true
        metricsConfig:
          type: jmxPrometheusExporter
          valueFrom:
            configMapKeyRef:
              name: kafka-metrics
              key: kafka-metrics-config.yml
      zookeeper:
        replicas: 3
        template:
          pod:
            securityContext:
              runAsNonRoot: true
              runAsUser: 1001
              runAsGroup: 1001
              fsGroup: 1001
              fsGroupChangePolicy: "OnRootMismatch"
              seccompProfile:
                type: RuntimeDefault
          zookeeperContainer:
            securityContext:
              runAsNonRoot: true
              runAsUser: 1001
              runAsGroup: 1001
              allowPrivilegeEscalation: false
              readOnlyRootFilesystem: true
              capabilities:
                drop:
                  - all
        resources:
          requests:
            cpu: 500m
            memory: 500Mi
          limits:
            cpu: 500m
            memory: 500Mi
        storage:
          type: persistent-claim
          size: 100Gi
          deleteClaim: false
        metricsConfig:
          type: jmxPrometheusExporter
          valueFrom:
            configMapKeyRef:
              name: kafka-metrics
              key: zookeeper-metrics-config.yml
      kafkaExporter:
        groupRegex: ".*"
        topicRegex: ".*"
        resources:
          requests:
            cpu: 200m
            memory: 100Mi
          limits:
            cpu: 200m
            memory: 100Mi
        template:
          pod:
            securityContext:
              runAsNonRoot: true
              runAsUser: 1001
              runAsGroup: 1001
              fsGroup: 1001
              fsGroupChangePolicy: "OnRootMismatch"
              seccompProfile:
                type: RuntimeDefault
          container:
            securityContext:
              runAsNonRoot: true
              runAsUser: 1001
              runAsGroup: 1001
              allowPrivilegeEscalation: false
              readOnlyRootFilesystem: true
              capabilities:
                drop:
                  - all

Additional context

- The Kafka cluster has 3 nodes, as you can see above. Our topic replication factor is 3 and minISR is 2. All of our topics have only one partition.

- We are using Kafka default configurations for our producers. For our use case we do not need auto commits, so we have disabled that for our consumers. Otherwise, we are using the default configurations for our consumers, too.
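
As a sanity check on the replication settings listed above, the arithmetic for write availability can be sketched as follows. This assumes the producers wait for all in-sync replicas (`acks=all`, which is the default in Kafka clients from 3.0 onwards; our client version is not stated here), and the function names are hypothetical. It only says whether writes can be accepted while a broker is down. It says nothing about how long a leader election takes, which is where the latency would come from.

```python
def tolerated_broker_outages(replication_factor: int, min_insync_replicas: int) -> int:
    """Number of replicas that can be offline while acks=all writes still succeed."""
    if not 1 <= min_insync_replicas <= replication_factor:
        raise ValueError("expected 1 <= min.insync.replicas <= replication factor")
    return replication_factor - min_insync_replicas


def can_write(replication_factor: int, min_insync_replicas: int, brokers_down: int) -> bool:
    """True if enough replicas remain for an acks=all write to be accepted.

    Assumes every offline broker hosts one replica of the partition, which
    holds for a 3-broker cluster with replication factor 3.
    """
    remaining = replication_factor - brokers_down
    return remaining >= min_insync_replicas


if __name__ == "__main__":
    # Values from this report: replication factor 3, min.insync.replicas 2.
    print(tolerated_broker_outages(3, 2))
    print(can_write(3, 2, brokers_down=1))
    print(can_write(3, 2, brokers_down=2))
```

With these settings, restarting one broker at a time should keep every topic writable. Since each topic has a single partition and therefore a single leader, each topic goes through a leader change when the broker hosting its leader is restarted.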