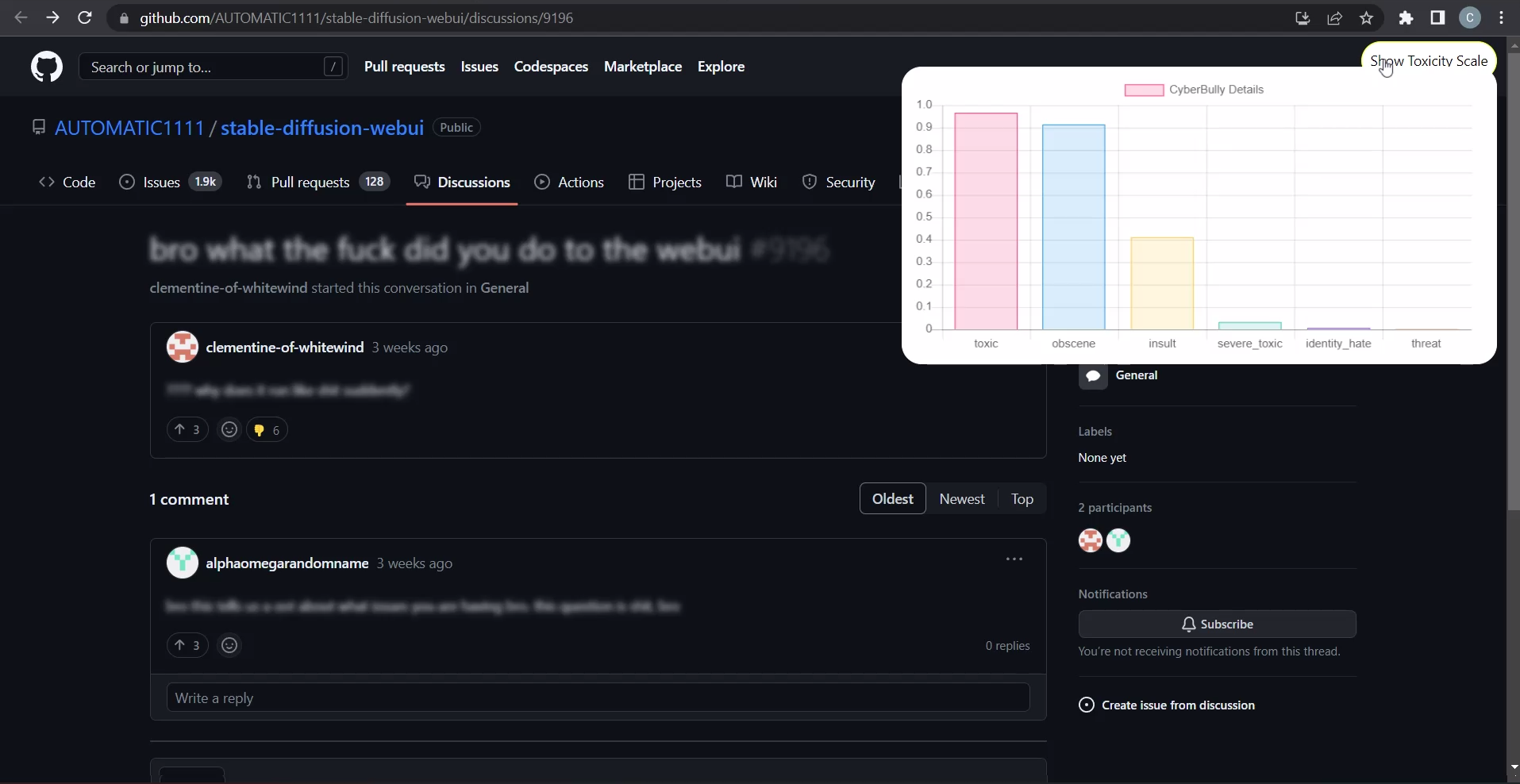

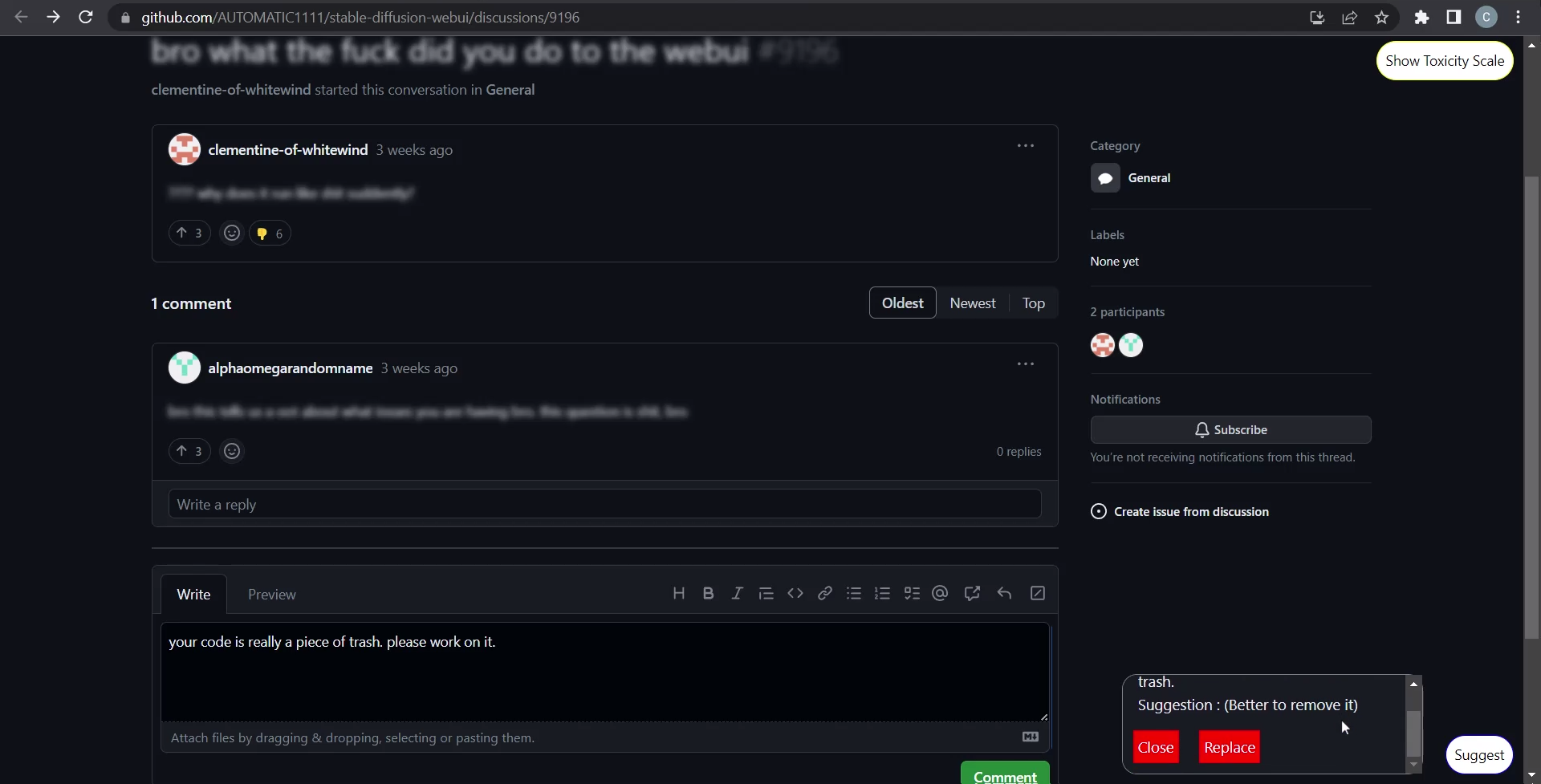

ToxiCheck is a Google Chrome extension that primarily targets software developer websites such as GitHub. It detects cyberbullying on these sites and provides toxicity reports on comments. ToxiCheck also helps users avoid toxic language by suggesting gentler alternatives as they type.

Models used -

UI -

- Vanilla JS

- Chart.js

Working of the Bullying Classifier (Text Blurring Mechanism)
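The blurring step is not spelled out in this README, so the following is only a minimal sketch under stated assumptions: each comment is assumed to arrive with a toxicity score between 0 and 1 from the classifier, and any comment at or above a hypothetical threshold of 0.5 is blurred with a CSS `filter`. The names `selectCommentsToBlur`, `blurElements` and `summarize`, the threshold and the `{ id, text, score }` shape are all illustrative, not ToxiCheck's own code.

```javascript
// Hypothetical threshold: the value ToxiCheck actually uses is not documented here.
const TOXICITY_THRESHOLD = 0.5;

// Return the ids of comments whose (assumed) toxicity score meets the threshold.
function selectCommentsToBlur(comments, threshold = TOXICITY_THRESHOLD) {
  return comments.filter((c) => c.score >= threshold).map((c) => c.id);
}

// In a content script, blur the matching comment elements with a CSS filter
// and leave a tooltip explaining why the text is hidden.
function blurElements(elements) {
  for (const el of elements) {
    el.style.filter = "blur(4px)";
    el.title = "Hidden by ToxiCheck: possibly toxic comment";
  }
}

// Count toxic vs. clean comments, e.g. as input for a Chart.js report.
function summarize(comments, threshold = TOXICITY_THRESHOLD) {
  const toxic = comments.filter((c) => c.score >= threshold).length;
  return { total: comments.length, toxic, clean: comments.length - toxic };
}
```

Keeping the selection and counting logic free of DOM calls means it can be checked outside the browser; only `blurElements` needs real page elements.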

Working of the Autosuggestor (Suggestion Mechanism)
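The README does not describe how suggestions are generated, so this is a minimal sketch of one possible approach, assuming a fixed lookup table of words and gentler replacements. The word list, `suggestGentlerText` and `debounce` are hypothetical illustrations; ToxiCheck may instead rely on a model for its suggestions.

```javascript
// Hypothetical lookup table: illustrative only, not ToxiCheck's vocabulary.
const GENTLER_ALTERNATIVES = new Map([
  ["stupid", "questionable"],
  ["garbage", "rough"],
  ["dumb", "confusing"],
]);

// Replace each known harsh word with a gentler alternative and record
// what was changed so the UI can show it as a suggestion.
function suggestGentlerText(text) {
  const suggestions = [];
  const rewritten = text.replace(/\b\w+\b/g, (word) => {
    const alt = GENTLER_ALTERNATIVES.get(word.toLowerCase());
    if (alt === undefined) return word;
    suggestions.push({ original: word, suggestion: alt });
    return alt;
  });
  return { rewritten, suggestions };
}

// Only run the check once the user pauses typing for `delayMs` milliseconds.
function debounce(fn, delayMs) {
  let timer;
  return (...args) => {
    clearTimeout(timer);
    timer = setTimeout(() => fn(...args), delayMs);
  };
}
```

In a content script, a debounced `suggestGentlerText` could be attached to a comment box's `input` event so suggestions appear without re-running on every keystroke.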

Installation -

- Clone the repository.

- Enter the `Toxicheck` directory and run `npm install`.

- In `app.js`, replace the bearer token with your own Hugging Face access token (write-enabled).

- Open Chrome and go to `chrome://extensions/`.

- Turn on Developer mode, click Load unpacked, then browse to the `Toxicheck` folder and select it.

- Enable (or reload) the extension.

- Navigate to the websites you want to check.
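`app.js` authenticates to Hugging Face with the bearer token set during installation. The sketch below shows how such a request is commonly built, assuming the serverless Inference API URL pattern `https://api-inference.huggingface.co/models/<model id>` and a JSON body of the form `{ "inputs": ... }`. The model id, the placeholder token `hf_xxx` and the helper names `buildInferenceRequest` and `classify` are assumptions, not values taken from the project.

```javascript
// Placeholder token: replace with your own Hugging Face access token.
const HF_TOKEN = "hf_xxx";

// Build the URL and fetch options for a text-classification request.
// The endpoint pattern is assumed; check it against the URL used in app.js.
function buildInferenceRequest(modelId, text, token = HF_TOKEN) {
  return {
    url: `https://api-inference.huggingface.co/models/${modelId}`,
    options: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${token}`,
        "Content-Type": "application/json",
      },
      body: JSON.stringify({ inputs: text }),
    },
  };
}

// Send the request and return the parsed JSON response (label/score data).
async function classify(modelId, text) {
  const { url, options } = buildInferenceRequest(modelId, text);
  const response = await fetch(url, options);
  if (!response.ok) {
    throw new Error(`Inference request failed: ${response.status}`);
  }
  return response.json();
}
```

Separating request construction from `fetch` makes it possible to verify the `Authorization` header without making a network call.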