WIP Migrate doc update metadata config#14273

WIP Migrate doc update metadata config#14273xugangqiang wants to merge 7 commits intoinfiniflow:mainfrom

Conversation

📝 WalkthroughWalkthroughConsolidated document management endpoints from Changes

Estimated code review effort🎯 4 (Complex) | ⏱️ ~60 minutes Possibly related PRs

Suggested labels

Suggested reviewers

Poem

🚥 Pre-merge checks | ✅ 2 | ❌ 3❌ Failed checks (2 warnings, 1 inconclusive)

✅ Passed checks (2 passed)

✏️ Tip: You can configure your own custom pre-merge checks in the settings. ✨ Finishing Touches⚔️ Resolve merge conflicts

Thanks for using CodeRabbit! It's free for OSS, and your support helps us grow. If you like it, consider giving us a shout-out. Comment |

There was a problem hiding this comment.

Actionable comments posted: 6

Caution

Some comments are outside the diff and can’t be posted inline due to platform limitations.

⚠️ Outside diff range comments (3)

docker/.env (2)

28-174:⚠️ Potential issue | 🟡 MinorAvoid redefining

COMPOSE_PROFILES.The second assignment at Line 174 is flagged by

dotenv-linterand can make tooling keep only the last value. Merge the default TEI profile into the original assignment instead.Proposed fix

-COMPOSE_PROFILES=${DOC_ENGINE},${DEVICE} +COMPOSE_PROFILES=${DOC_ENGINE},${DEVICE},tei-cpu ... -COMPOSE_PROFILES=${COMPOSE_PROFILES},tei-cpu +# COMPOSE_PROFILES=${COMPOSE_PROFILES},tei-cpu🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@docker/.env` around lines 28 - 174, The COMPOSE_PROFILES variable is being redefined (duplicate assignments of COMPOSE_PROFILES); remove the later reassignment and instead merge the default tei profile into the original declaration by appending ",tei-cpu" (or conditionally include it via existing expansion) so COMPOSE_PROFILES is set once; update the first COMPOSE_PROFILES line (symbol: COMPOSE_PROFILES) to include the tei-cpu profile and delete the trailing COMPOSE_PROFILES=${COMPOSE_PROFILES},tei-cpu assignment.

20-20:⚠️ Potential issue | 🟠 MajorSet the default document engine to Infinity.

docker/.envstill defaults to Elasticsearch, so the default compose profile does not switch to Infinity.Proposed fix

-DOC_ENGINE=${DOC_ENGINE:-elasticsearch} +DOC_ENGINE=infinityAs per coding guidelines,

docker/.env: SetDOC_ENGINE=infinityindocker/.envto switch from Elasticsearch to Infinity engine.🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@docker/.env` at line 20, Update the default DOC_ENGINE value in docker/.env so the compose profile uses Infinity by default: change the DOC_ENGINE fallback from "elasticsearch" to "infinity" (modify the line containing DOC_ENGINE=${DOC_ENGINE:-elasticsearch} to use "infinity" instead) so the environment variable DOC_ENGINE defaults to infinity when not set.web/src/pages/dataset/components/metedata/hooks/use-manage-modal.ts (1)

435-452:⚠️ Potential issue | 🟠 MajorAdd

idto dependency array and handle bothdocumentIdandidproperties inotherData.The condition checks

otherData?.documentId, but table callers passrecord: row.originalwhere the document object has propertyid, notdocumentId. This causes the metadata save to silently skip when called from the dataset table. Also,idfromuseParams()is used in the request but missing from the dependency array (line 452), which can cause stale dataset IDs in the closure.Proposed fix

// otherData contains: id (document_id), kb_id (dataset_id) - if (otherData?.documentId && id) { + const documentId = otherData?.documentId ?? otherData?.id; + if (documentId && id) { const { data: res } = await updateDocumentMetaDataConfig({ kb_id: id, - doc_id: otherData.documentId, + doc_id: documentId, data: { metadata: data, }, }); if (res.code === 0) { message.success(t('message.operated')); callback?.(); } } return data; }, - [tableData, t, otherData], + [tableData, t, otherData, id],🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@web/src/pages/dataset/components/metedata/hooks/use-manage-modal.ts` around lines 435 - 452, The save flow skips when otherData uses `id` and may capture a stale `id` from useParams; update the condition and payload to accept either property and add `id` to the hook deps: inside the block that calls updateDocumentMetaDataConfig, use const docId = otherData?.documentId ?? otherData?.id and check if (docId && id) before calling updateDocumentMetaDataConfig({ kb_id: id, doc_id: docId, data: { metadata: data } }), and update the dependency array for the enclosing hook to include id (e.g., [tableData, t, otherData, id]); references: otherData, id, updateDocumentMetaDataConfig in use-manage-modal.ts.

🧹 Nitpick comments (1)

test/testcases/test_web_api/test_common.py (1)

417-435: Use the existingDATASETS_URLconstant for consistency.Other functions in this file reference

DATASETS_URL/HOST_ADDRESS{DATASETS_URL}; hardcoding/api/v1/datasets/...here makes the endpoint drift from the rest of the helpers ifVERSIONchanges.♻️ Suggested refactor

res = requests.put( - url=f"{HOST_ADDRESS}/api/v1/datasets/{dataset_id}/documents/{document_id}/metadata/config", + url=f"{HOST_ADDRESS}{DATASETS_URL}/{dataset_id}/documents/{document_id}/metadata/config", headers=headers, auth=auth, json=payload, data=data )🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@test/testcases/test_web_api/test_common.py` around lines 417 - 435, The helper function document_update_metadata_config hardcodes the path "/api/v1/datasets/..."; change it to use the existing DATASETS_URL constant (combine HOST_ADDRESS and DATASETS_URL) so the endpoint stays consistent if VERSION/DATASETS_URL changes—update the url argument in document_update_metadata_config to f"{HOST_ADDRESS}{DATASETS_URL}/{dataset_id}/documents/{document_id}/metadata/config" (preserve using headers, auth, json, data and returning res.json()).

🤖 Prompt for all review comments with AI agents

Verify each finding against the current code and only fix it if needed.

Inline comments:

In `@api/apps/restful_apis/document_api.py`:

- Around line 738-743: The deduplication branch is inverted: when

check_duplicate_ids(doc_ids, "document") returns duplicate_messages you should

replace doc_ids with unique_doc_ids instead of keeping the original duplicated

list. Update the block that calls check_duplicate_ids so that if

duplicate_messages is truthy you assign doc_ids = unique_doc_ids (and still log

the warning), and if duplicate_messages is falsy you leave doc_ids as-is;

alternatively remove this redundant check entirely since

DeleteDocumentReq.validate_ids already rejects duplicates. Target the

check_duplicate_ids call and the surrounding assignment/branch to implement the

fix.

- Around line 855-870: Replace the ownership check that calls

KnowledgebaseService.query(...) with the same permission helper used elsewhere:

KnowledgebaseService.accessible(kb_id=dataset_id, user_id=tenant_id) so

team-shared datasets are allowed; then, after calling req = await

get_request_json(), guard against a None/non-dict body (e.g., if not req or not

isinstance(req, dict): return get_error_argument_result(message="metadata is

required")) before checking "metadata" in req; keep the existing

DocumentService.query(id=document_id, kb_id=dataset_id) and doc = doc[0] logic

intact.

In `@api/utils/validation_utils.py`:

- Line 821: DeleteDocumentReq currently allows empty targets and ambiguous

payloads; update the DeleteDocumentReq schema (class DeleteDocumentReq that

inherits DeleteReq) to add validation that rejects requests where both ids is

missing/empty and delete_all is not true, and also rejects requests where

delete_all is true while ids is provided (non-empty). Implement this as a

schema-level validator (e.g., a Pydantic root_validator or equivalent) on

DeleteDocumentReq that raises a validation error with a clear message when (1)

neither ids nor delete_all specify a target, (2) ids is an empty list, or (3)

delete_all is true and ids is present, ensuring the API rejects missing or

conflicting delete targets at the schema boundary.

In `@web/src/hooks/use-document-request.ts`:

- Around line 319-328: The deletion path only uses const { id: datasetId } =

useParams() which drops the query-param driven fallback; update the params

destructuring to include the alternate key (e.g., const { id, knowledgeId } =

useParams()) and compute datasetId = knowledgeId || id, then pass that datasetId

into deleteDocument inside the useMutation mutationFn (which references

deleteDocument and mutateAsync) so it uses the same knowledgeId||id fallback as

useFetchDocumentList.

---

Outside diff comments:

In `@docker/.env`:

- Around line 28-174: The COMPOSE_PROFILES variable is being redefined

(duplicate assignments of COMPOSE_PROFILES); remove the later reassignment and

instead merge the default tei profile into the original declaration by appending

",tei-cpu" (or conditionally include it via existing expansion) so

COMPOSE_PROFILES is set once; update the first COMPOSE_PROFILES line (symbol:

COMPOSE_PROFILES) to include the tei-cpu profile and delete the trailing

COMPOSE_PROFILES=${COMPOSE_PROFILES},tei-cpu assignment.

- Line 20: Update the default DOC_ENGINE value in docker/.env so the compose

profile uses Infinity by default: change the DOC_ENGINE fallback from

"elasticsearch" to "infinity" (modify the line containing

DOC_ENGINE=${DOC_ENGINE:-elasticsearch} to use "infinity" instead) so the

environment variable DOC_ENGINE defaults to infinity when not set.

In `@web/src/pages/dataset/components/metedata/hooks/use-manage-modal.ts`:

- Around line 435-452: The save flow skips when otherData uses `id` and may

capture a stale `id` from useParams; update the condition and payload to accept

either property and add `id` to the hook deps: inside the block that calls

updateDocumentMetaDataConfig, use const docId = otherData?.documentId ??

otherData?.id and check if (docId && id) before calling

updateDocumentMetaDataConfig({ kb_id: id, doc_id: docId, data: { metadata: data

} }), and update the dependency array for the enclosing hook to include id

(e.g., [tableData, t, otherData, id]); references: otherData, id,

updateDocumentMetaDataConfig in use-manage-modal.ts.

---

Nitpick comments:

In `@test/testcases/test_web_api/test_common.py`:

- Around line 417-435: The helper function document_update_metadata_config

hardcodes the path "/api/v1/datasets/..."; change it to use the existing

DATASETS_URL constant (combine HOST_ADDRESS and DATASETS_URL) so the endpoint

stays consistent if VERSION/DATASETS_URL changes—update the url argument in

document_update_metadata_config to

f"{HOST_ADDRESS}{DATASETS_URL}/{dataset_id}/documents/{document_id}/metadata/config"

(preserve using headers, auth, json, data and returning res.json()).

🪄 Autofix (Beta)

Fix all unresolved CodeRabbit comments on this PR:

- Push a commit to this branch (recommended)

- Create a new PR with the fixes

ℹ️ Review info

⚙️ Run configuration

Configuration used: Organization UI

Review profile: CHILL

Plan: Pro

Run ID: 1c054373-180b-44e8-9b61-92c84ecd9723

📒 Files selected for processing (17)

api/apps/document_app.pyapi/apps/restful_apis/document_api.pyapi/apps/sdk/doc.pyapi/utils/validation_utils.pydocker/.envtest/testcases/test_http_api/test_file_management_within_dataset/test_delete_documents.pytest/testcases/test_http_api/test_file_management_within_dataset/test_doc_sdk_routes_unit.pytest/testcases/test_sdk_api/test_file_management_within_dataset/test_delete_documents.pytest/testcases/test_web_api/test_chunk_app/test_create_chunk.pytest/testcases/test_web_api/test_chunk_app/test_update_chunk.pytest/testcases/test_web_api/test_common.pytest/testcases/test_web_api/test_document_app/conftest.pytest/testcases/test_web_api/test_document_app/test_rm_documents.pyweb/src/hooks/use-document-request.tsweb/src/pages/dataset/components/metedata/hooks/use-manage-modal.tsweb/src/services/knowledge-service.tsweb/src/utils/api.ts

💤 Files with no reviewable changes (2)

- api/apps/document_app.py

- test/testcases/test_http_api/test_file_management_within_dataset/test_doc_sdk_routes_unit.py

| # make sure each id is unique | ||

| unique_doc_ids, duplicate_messages = check_duplicate_ids(doc_ids, "document") | ||

| if duplicate_messages: | ||

| logging.warning(f"duplicate_messages:{duplicate_messages}") | ||

| else: | ||

| doc_ids = unique_doc_ids |

There was a problem hiding this comment.

Inverted dedup logic: duplicates are kept, uniques are discarded.

When check_duplicate_ids reports duplicates, the code logs a warning and proceeds with the original (duplicated) doc_ids; only when there are no duplicates does it assign unique_doc_ids. The branches are reversed — on duplicates you should be using unique_doc_ids, not the unfiltered list.

In practice this is masked today because DeleteDocumentReq.validate_ids already rejects duplicates with a 101 error before reaching this block, making this code effectively dead. Either fix the inversion or drop the redundant check.

🛠️ Proposed fix

- # make sure each id is unique

- unique_doc_ids, duplicate_messages = check_duplicate_ids(doc_ids, "document")

- if duplicate_messages:

- logging.warning(f"duplicate_messages:{duplicate_messages}")

- else:

- doc_ids = unique_doc_ids

+ # make sure each id is unique

+ unique_doc_ids, duplicate_messages = check_duplicate_ids(doc_ids, "document")

+ if duplicate_messages:

+ logging.warning(f"duplicate_messages:{duplicate_messages}")

+ doc_ids = unique_doc_ids🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@api/apps/restful_apis/document_api.py` around lines 738 - 743, The

deduplication branch is inverted: when check_duplicate_ids(doc_ids, "document")

returns duplicate_messages you should replace doc_ids with unique_doc_ids

instead of keeping the original duplicated list. Update the block that calls

check_duplicate_ids so that if duplicate_messages is truthy you assign doc_ids =

unique_doc_ids (and still log the warning), and if duplicate_messages is falsy

you leave doc_ids as-is; alternatively remove this redundant check entirely

since DeleteDocumentReq.validate_ids already rejects duplicates. Target the

check_duplicate_ids call and the surrounding assignment/branch to implement the

fix.

|

|

||

| # Delete documents using existing FileService.delete_docs | ||

| errors = await thread_pool_exec(FileService.delete_docs, doc_ids, tenant_id) | ||

|

|

||

| if errors: | ||

| return get_error_data_result(message=str(errors)) | ||

|

|

||

| return get_result(data={"deleted": len(doc_ids)}) | ||

| except Exception as e: | ||

| logging.exception(e) | ||

| return get_error_data_result(message="Internal server error") |

There was a problem hiding this comment.

Partial-failure semantics and observability.

FileService.delete_docs iterates sequentially and accumulates errors into a single string while still committing successful deletions. When errors is truthy the endpoint returns a generic error, but some documents may already be deleted — the client sees a failure without any indication of which IDs succeeded. Consider returning structured per-id results (e.g., {"deleted": [...], "failed": [...]}) so callers can reconcile state, and add an logging.info for the deletion flow as required by the repo guideline to add logging for new flows.

As per coding guidelines: "Add logging for new flows".

|

|

||

| @manager.route("/datasets/<dataset_id>/documents/<document_id>/metadata/config",methods=["PUT"]) # noqa: F821 | ||

| @login_required | ||

| @add_tenant_id_to_kwargs | ||

| async def update_metadata_config(tenant_id, dataset_id, document_id): | ||

| """ | ||

| Update document metadata configuration. | ||

| --- | ||

| tags: | ||

| - Documents | ||

| security: | ||

| - ApiKeyAuth: [] | ||

| parameters: | ||

| - in: path | ||

| name: dataset_id | ||

| type: string | ||

| required: true | ||

| description: ID of the dataset. | ||

| - in: path | ||

| name: document_id | ||

| type: string | ||

| required: true | ||

| description: ID of the document. | ||

| - in: header | ||

| name: Authorization | ||

| type: string | ||

| required: true | ||

| description: Bearer token for authentication. | ||

| - in: body | ||

| name: body | ||

| description: Metadata configuration. | ||

| required: true | ||

| schema: | ||

| type: object | ||

| properties: | ||

| metadata: | ||

| type: object | ||

| description: Metadata configuration JSON. | ||

| responses: | ||

| 200: | ||

| description: Document updated successfully. | ||

| """ | ||

| # Verify ownership and existence of dataset | ||

| if not KnowledgebaseService.query(id=dataset_id, tenant_id=tenant_id): | ||

| return get_error_data_result(message="You don't own the dataset.") | ||

|

|

||

| # Verify document exists in the dataset | ||

| doc = DocumentService.query(id=document_id, kb_id=dataset_id) | ||

| if not doc: | ||

| return get_error_data_result( | ||

| message=f"Document {document_id} not found in dataset {dataset_id}" | ||

| ) | ||

| doc = doc[0] | ||

|

|

||

| # Get request body | ||

| req = await get_request_json() | ||

| if "metadata" not in req: | ||

| return get_error_argument_result(message="metadata is required") | ||

|

|

||

| # Update parser config with metadata | ||

| DocumentService.update_parser_config(doc.id, {"metadata": req["metadata"]}) | ||

|

|

||

| # Get updated document | ||

| e, doc = DocumentService.get_by_id(doc.id) | ||

| if not e: | ||

| return get_data_error_result(message="Document not found!") | ||

|

|

||

| return get_result(data=doc.to_dict()) |

There was a problem hiding this comment.

🛠️ Refactor suggestion | 🟠 Major

Add logging and error handling to the new metadata-config flow.

This new handler has no logging calls and no try/except around DocumentService.update_parser_config, which can raise (e.g., LookupError from the underlying service). An unhandled exception here will surface as a 500 with no structured log context. Please add info/warning/error logs along the happy path and failure branches, and wrap the service call in a try/except consistent with update_document / delete_documents in this file.

As per coding guidelines: "Add logging for new flows".

| # Verify ownership and existence of dataset | ||

| if not KnowledgebaseService.query(id=dataset_id, tenant_id=tenant_id): | ||

| return get_error_data_result(message="You don't own the dataset.") | ||

|

|

||

| # Verify document exists in the dataset | ||

| doc = DocumentService.query(id=document_id, kb_id=dataset_id) | ||

| if not doc: | ||

| return get_error_data_result( | ||

| message=f"Document {document_id} not found in dataset {dataset_id}" | ||

| ) | ||

| doc = doc[0] | ||

|

|

||

| # Get request body | ||

| req = await get_request_json() | ||

| if "metadata" not in req: | ||

| return get_error_argument_result(message="metadata is required") |

There was a problem hiding this comment.

Permission check inconsistency and missing null guard on request body.

Two concerns in this handler:

- Ownership is checked via

KnowledgebaseService.query(id=dataset_id, tenant_id=tenant_id), but other handlers in this file (e.g.,list_docs,metadata_summary,delete_documents) useKnowledgebaseService.accessible(kb_id=dataset_id, user_id=tenant_id). Thequery(...)variant only matches datasets whosetenant_idcolumn equals the caller's tenant, so team-shared datasets that are reachable viaaccessible()will be rejected here with "You don't own the dataset." Please align with the rest of the file unless the stricter check is intentional. req = await get_request_json()followed byif "metadata" not in reqwill raiseTypeErrorif the body is empty / not a JSON object (returnsNone). Guard against that before the key check.

🛠️ Suggested changes

- # Verify ownership and existence of dataset

- if not KnowledgebaseService.query(id=dataset_id, tenant_id=tenant_id):

- return get_error_data_result(message="You don't own the dataset.")

+ # Verify ownership and existence of dataset

+ if not KnowledgebaseService.accessible(kb_id=dataset_id, user_id=tenant_id):

+ return get_error_data_result(message=f"You don't own the dataset {dataset_id}. ")

@@

- # Get request body

- req = await get_request_json()

- if "metadata" not in req:

- return get_error_argument_result(message="metadata is required")

+ # Get request body

+ req = await get_request_json() or {}

+ if not isinstance(req, dict) or "metadata" not in req:

+ return get_error_argument_result(message="metadata is required")#!/bin/bash

# Confirm which permission helper is used across restful_apis handlers.

rg -nP "KnowledgebaseService\.(accessible|query)\(" --type=py -g '!**/test/**'🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@api/apps/restful_apis/document_api.py` around lines 855 - 870, Replace the

ownership check that calls KnowledgebaseService.query(...) with the same

permission helper used elsewhere:

KnowledgebaseService.accessible(kb_id=dataset_id, user_id=tenant_id) so

team-shared datasets are allowed; then, after calling req = await

get_request_json(), guard against a None/non-dict body (e.g., if not req or not

isinstance(req, dict): return get_error_argument_result(message="metadata is

required")) before checking "metadata" in req; keep the existing

DocumentService.query(id=document_id, kb_id=dataset_id) and doc = doc[0] logic

intact.

| class DeleteDatasetReq(DeleteReq): ... | ||

|

|

||

|

|

||

| class DeleteDocumentReq(DeleteReq): ... |

There was a problem hiding this comment.

Validate empty and conflicting document-delete targets.

DeleteDocumentReq currently accepts {}, {"ids": []}, and {"delete_all": true, "ids": [...]}. For a destructive API, reject missing targets and ambiguous delete_all + ids payloads at the schema boundary.

Proposed fix

-class DeleteDocumentReq(DeleteReq): ...

+class DeleteDocumentReq(DeleteReq):

+ `@model_validator`(mode="after")

+ def validate_delete_target(self) -> "DeleteDocumentReq":

+ if self.delete_all and self.ids:

+ raise PydanticCustomError(

+ "mutually_exclusive",

+ "`ids` and `delete_all` cannot be used together",

+ )

+ if not self.delete_all and not self.ids:

+ raise PydanticCustomError(

+ "missing_delete_target",

+ "Either `ids` or `delete_all=true` is required",

+ )

+ return self🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@api/utils/validation_utils.py` at line 821, DeleteDocumentReq currently

allows empty targets and ambiguous payloads; update the DeleteDocumentReq schema

(class DeleteDocumentReq that inherits DeleteReq) to add validation that rejects

requests where both ids is missing/empty and delete_all is not true, and also

rejects requests where delete_all is true while ids is provided (non-empty).

Implement this as a schema-level validator (e.g., a Pydantic root_validator or

equivalent) on DeleteDocumentReq that raises a validation error with a clear

message when (1) neither ids nor delete_all specify a target, (2) ids is an

empty list, or (3) delete_all is true and ids is present, ensuring the API

rejects missing or conflicting delete targets at the schema boundary.

| const { id: datasetId } = useParams(); | ||

| const { | ||

| data, | ||

| isPending: loading, | ||

| mutateAsync, | ||

| } = useMutation({ | ||

| mutationKey: [DocumentApiAction.RemoveDocument], | ||

| mutationFn: async (documentIds: string | string[]) => { | ||

| const { data } = await kbService.documentRm({ doc_id: documentIds }); | ||

| const ids = Array.isArray(documentIds) ? documentIds : [documentIds]; | ||

| const { data } = await deleteDocument(datasetId!, ids); |

There was a problem hiding this comment.

Use the same dataset-id fallback as the document list.

useFetchDocumentList supports knowledgeId || id, but deletion only uses route id. In query-param driven views this sends deleteDocument(undefined, ids) and hits the wrong endpoint.

Proposed fix

- const { id: datasetId } = useParams();

+ const { id: routeDatasetId } = useParams();

+ const { knowledgeId } = useGetKnowledgeSearchParams();

...

const ids = Array.isArray(documentIds) ? documentIds : [documentIds];

- const { data } = await deleteDocument(datasetId!, ids);

+ const datasetId = routeDatasetId || knowledgeId;

+ if (!datasetId) {

+ message.error('Dataset ID is required');

+ return 500;

+ }

+ const { data } = await deleteDocument(datasetId, ids);🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@web/src/hooks/use-document-request.ts` around lines 319 - 328, The deletion

path only uses const { id: datasetId } = useParams() which drops the query-param

driven fallback; update the params destructuring to include the alternate key

(e.g., const { id, knowledgeId } = useParams()) and compute datasetId =

knowledgeId || id, then pass that datasetId into deleteDocument inside the

useMutation mutationFn (which references deleteDocument and mutateAsync) so it

uses the same knowledgeId||id fallback as useFetchDocumentList.

Codecov Report✅ All modified and coverable lines are covered by tests. Additional details and impacted files@@ Coverage Diff @@

## main #14273 +/- ##

==========================================

- Coverage 96.66% 95.97% -0.70%

==========================================

Files 10 10

Lines 690 695 +5

Branches 108 111 +3

==========================================

Hits 667 667

- Misses 8 11 +3

- Partials 15 17 +2 ☔ View full report in Codecov by Sentry. 🚀 New features to boost your workflow:

|

There was a problem hiding this comment.

Actionable comments posted: 18

Caution

Some comments are outside the diff and can’t be posted inline due to platform limitations.

⚠️ Outside diff range comments (4)

test/testcases/test_web_api/test_document_app/test_document_metadata.py (1)

177-180:⚠️ Potential issue | 🟠 MajorPass a dataset ID to

document_infoshere too.In

test/testcases/test_web_api/test_common.py:397-399, the second positional argument isdataset_id; this test currently passes the params dict in that slot, so the URL is built with the dict as the dataset ID and noidsfilter is sent.Proposed fix

- def test_infos_invalid_doc_id(self, WebApiAuth): - res = document_infos(WebApiAuth, {"doc_ids": ["invalid_id"]}) + def test_infos_invalid_doc_id(self, WebApiAuth, add_dataset_func): + dataset_id = add_dataset_func + res = document_infos(WebApiAuth, dataset_id, {"ids": ["invalid_id"]})Based on learnings, Applies to tests/**/*.py : Add/adjust tests for behavior changes.

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@test/testcases/test_web_api/test_document_app/test_document_metadata.py` around lines 177 - 180, The test test_infos_invalid_doc_id calls document_infos(WebApiAuth, {"doc_ids": ["invalid_id"]}) but passes the params dict as the dataset_id slot; change the call so the second argument is the dataset_id and the params dict is passed as the third argument (i.e., call document_infos with WebApiAuth, dataset_id, {"doc_ids": ["invalid_id"]}); update the call site in test_infos_invalid_doc_id to use the proper dataset identifier used elsewhere in tests (same value used in test_common where document_infos is called) so the URL and ids filter are built correctly.sdk/python/test/test_frontend_api/test_chunk.py (1)

56-66:⚠️ Potential issue | 🔴 CriticalPolling loop is broken by the new response shape.

The dataset-scoped

GET /api/v1/datasets/{dataset_id}/documentsreturns{"data": {"total": N, "docs": [...]}}, whereas the old/v1/document/infosreturned{"data": [...]}. The loopfor doc_info in res['data']now iterates the dict's keys ('total','docs') rather than document objects, sodoc_info['progress']will raiseTypeError, andfinished_countwill never reachdoc_count— the test will hang until the outer timeout.🛠️ Proposed fix

- res = get_docs_info(get_auth, dataset_id, doc_ids=doc_id_list) + res = get_docs_info(get_auth, dataset_id, doc_ids=doc_id_list) finished_count = 0 - for doc_info in res['data']: + for doc_info in res['data']['docs']: if doc_info['progress'] == 1: finished_count += 1🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@sdk/python/test/test_frontend_api/test_chunk.py` around lines 56 - 66, The polling loop in the test breaks because get_docs_info now returns data as {"total": N, "docs": [...]}; update the loop that inspects res from get_docs_info(get_auth, dataset_id, doc_ids=doc_id_list) so it iterates the document list (use docs = res['data']['docs'] when res['data'] is a dict with 'docs', otherwise fallback to res['data'] for backward compatibility), then loop over docs and check doc_info['progress'] to count finished documents (symbols to edit: get_docs_info call, res variable handling, the for doc_info in ... loop, finished_count and progress check).sdk/python/ragflow_sdk/modules/dataset.py (1)

83-98:⚠️ Potential issue | 🔴 Critical

params.append(...)on adictwill raiseAttributeError—ids=path is broken in the public SDK.

paramsis constructed as a dict literal on lines 83–93, but whenidsis provided, line 97 callsparams.append((...)), whichdictdoes not support. Any caller passingids=[...]toDataSet.list_documentswill hitAttributeError: 'dict' object has no attribute 'append'. To send multiple same-key query parameters (ids=a&ids=b&...) as the backend expects inapi/apps/restful_apis/document_api.py::_get_docs_with_request(q.getlist("ids")), convertparamsto a list of tuples.🛠️ Proposed fix

- params = { - "id": id, - "name": name, - "keywords": keywords, - "page": page, - "page_size": page_size, - "orderby": orderby, - "desc": desc, - "create_time_from": create_time_from, - "create_time_to": create_time_to, - } - # Handle ids parameter - convert to multiple query params - if ids: - for doc_id in ids: - params.append(("ids", doc_id)) + params: list[tuple[str, Any]] = [ + ("id", id), + ("name", name), + ("keywords", keywords), + ("page", page), + ("page_size", page_size), + ("orderby", orderby), + ("desc", desc), + ("create_time_from", create_time_from), + ("create_time_to", create_time_to), + ] + # Handle ids parameter - append one entry per id + if ids: + for doc_id in ids: + params.append(("ids", doc_id))Consider adding an SDK unit test that exercises the

ids=[...]path to prevent regressions.🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@sdk/python/ragflow_sdk/modules/dataset.py` around lines 83 - 98, The params dict construction in DataSet.list_documents uses params.append when ids is present which fails; change params to be a list of (key,value) tuples (or convert the existing dict into a list of tuples) before appending multiple ("ids", doc_id) entries, then pass that list to self.get(f"/datasets/{self.id}/documents", params=params); update the handling around the params variable and ensure the ids loop uses params.append(("ids", doc_id)); add a unit test that calls list_documents with ids=[...] to cover this path.internal/cli/user_command.go (1)

19-27:⚠️ Potential issue | 🟡 MinorEscape provider and instance names before building the route.

Line 1254 interpolates user-supplied path segments directly. Instance names containing

/,?,#, or spaces can route to the wrong endpoint or fail before reaching the server.🐛 Proposed fix

import ( "bufio" "context" "encoding/json" "fmt" + neturl "net/url" "os" ce "ragflow/internal/cli/contextengine" "strings" )- url := fmt.Sprintf("/providers/%s/instances/%s/balance", providerName, instanceName) + url := fmt.Sprintf( + "/providers/%s/instances/%s/balance", + neturl.PathEscape(providerName), + neturl.PathEscape(instanceName), + )Also applies to: 1254-1254

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@internal/cli/user_command.go` around lines 19 - 27, The code interpolates user-supplied provider and instance names directly into a route (causing broken routing for values with "/", "?", "#", or spaces); update the route construction in the user command handler (e.g., where provider and instance variables are combined into the route/path in the function handling the user command, such as handleUserCommand or the code that sets the route variable) to escape path segments using net/url.PathEscape (call PathEscape(provider) and PathEscape(instance) before appending or fmt.Sprintf into the route). Ensure any other concatenations that build URL paths from provider or instance use the escaped values so path traversal and routing errors are prevented.

🧹 Nitpick comments (3)

docs/develop/deepwiki.md (1)

16-16: Consider addressing style suggestions from static analysis.Static analysis tools flagged a few minor style issues that could improve readability:

- Line 16: "may lag behind" → consider "may lag"

- Line 35: Repetitive "who want to" phrasing in nearby sentences

- Line 54: "making changes" → consider "changing" or "modifying"

- Line 64: "lagging behind" → consider "lagging"

These are optional refinements and don't affect functionality.

Also applies to: 35-35, 54-54, 64-64

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@docs/develop/deepwiki.md` at line 16, Replace the flagged phrasing in the DeepWiki doc: change "may lag behind" to "may lag", remove or rephrase the repetitive "who want to" phrasing around the sentence that repeats it, replace "making changes" with "changing" or "modifying", and change "lagging behind" to "lagging" for consistency; update the affected sentences to maintain natural flow and run the static checker to confirm no remaining style warnings.internal/service/model_service.go (1)

478-493: Remove the unreachable commented-out implementation.This stale block sits after the successful return path and makes the new balance flow harder to audit.

🧹 Proposed cleanup

- // convert instance.Extra (json string) to map - //var extra map[string]string - //err = json.Unmarshal([]byte(instance.Extra), &extra) - //if err != nil { - // return nil, common.CodeServerError, err - //} - // - //result := map[string]interface{}{ - // "id": instance.ID, - // "instanceName": instance.InstanceName, - // "providerID": instance.ProviderID, - // "status": instance.Status, - // "region": extra["region"], - //} - // - //return result, common.CodeSuccess, nil🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@internal/service/model_service.go` around lines 478 - 493, Remove the stale commented-out JSON parsing and result-construction block (the code that unmarshals instance.Extra into extra and builds result with id, instanceName, providerID, status, region) that appears after the successful return path; delete those commented lines so the function no longer contains unreachable legacy code and the new balance flow in model_service.go is easier to audit.test/testcases/test_web_api/test_search_app/test_search_routes_unit.py (1)

212-219:async_askstub yields nothing, hiding data-shape regressions.The real

async_askyields dicts shaped like{"answer": str, "reference": dict, "final": bool, ...}.if False: yield Nonemakes the stub a valid async generator but emits nothing, so any future test won't catch regressions in howcompletionserializes yielded items viajson.dumps({"code":0,"message":"","data":ans}, ...). Consider yielding at least one realistic payload so serialization and frame ordering (streamed payload → terminaldata: True) are actually verified.🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@test/testcases/test_web_api/test_search_app/test_search_routes_unit.py` around lines 212 - 219, The stubbed async generator async_ask currently yields nothing, hiding serialization and framing regressions; replace its body so it yields at least one realistic payload matching the real contract (e.g., a dict with keys like "answer": str, "reference": dict, "final": bool and any other expected fields) before completing so the test exercises json.dumps({"code":0,"message":"","data":ans}, ...) and the streamed payload → terminal `data: True` ordering; update the stubbed function _async_ask in the test to yield that realistic dict from ModuleType("api.db.services.dialog_service").async_ask.

🤖 Prompt for all review comments with AI agents

Verify each finding against the current code and only fix it if needed.

Inline comments:

In `@api/apps/restful_apis/chat_api.py`:

- Around line 154-167: The helper _create_session_for_completion currently

ignores the return value of ConversationService.save and can silently continue

on failure; update _create_session_for_completion to capture and check the

result of ConversationService.save(**conv) and if it indicates failure, raise a

clear error (or return an error tuple) instead of proceeding to get_by_id;

mirror the behavior used in create_session (use the same success/failure check

and error handling pattern) and use a more specific exception/message (e.g.,

SessionCreationError or a descriptive LookupError message) so callers can

distinguish a save failure from a missing lookup.

- Around line 1040-1068: When chat_id is absent the dialog created by

_build_default_completion_dialog has llm_id="" and chat_model_id can be empty,

causing async_chat(dia, ...) to be called with an invalid model; change the

branch where chat_id is falsy to resolve a valid LLM id: fetch tenant default

LLM (or require req["llm_id"] if preferred) and set dia.llm_setting/llm_id

accordingly before calling async_chat, and add logging.info calls to (1) the

"direct completion" path where dia is created and the chosen llm_id/model is

recorded, and (2) inside _create_session_for_completion to log when a session is

auto-created and which model/tenant_llm_id was used; update references in this

branch to use chat_model_id or the tenant default consistently so async_chat

always receives a non-empty model id.

In `@api/apps/restful_apis/document_api.py`:

- Around line 531-535: In _get_docs_with_request: move the mutual-exclusive

check for doc_id and doc_ids so it runs before the doc_id ownership DB query

(i.e., place the doc_id/doc_ids conflict check before the block using doc_id),

and make the error return match the function's 4-tuple contract (return

RetCode.DATA_ERROR, "<message>", [], 0). Also, when doc_ids is provided and

doc_ids_filter already exists from _parse_doc_id_filter_with_metadata, intersect

doc_ids_filter with doc_ids (set intersection) instead of unconditionally

assigning doc_ids to preserve metadata filtering.

In `@api/apps/restful_apis/search_api.py`:

- Around line 176-215: The completion handler currently passes current_user.id

(uid) into async_ask and only yields exceptions to the client; update it to

derive tenant_id from the found SearchService.get_detail(search_id) (use

search_app.get("tenant_id", current_user.id)) and pass that tenant_id to

async_ask instead of uid to match the mindmap handler, and inside the async

stream() except block add a server-side log call (logging.exception(ex)) before

yielding the error to the client; leave the existing accessible4deletion check

in place but do not change its name here.

In `@docs/develop/deepwiki.md`:

- Line 64: Add a short, actionable step-by-step instruction to the sentence

about manual re-indexing on the DeepWiki page: specify where to click (e.g.,

open the DeepWiki page for the repository, click the "Settings" or "Actions"

menu, then select "Re-index" or "Trigger re-index"), mention any confirmation

needed and expected background processing time, and optionally add a link or

pointer to the DeepWiki UI help page so users can follow exact UI labels.

- Line 11: The intro sentence currently calls the knowledge base

"always-up-to-date" which contradicts the caution that "it may lag behind the

latest official release"; update the opening line (the phrase

"always-up-to-date") to a reconciled, accurate wording such as "AI-generated,

regularly-updated knowledge base" or "AI-generated, frequently-updated knowledge

base (may lag behind the latest release)" and ensure the phrasing in the initial

description and the caution sentence ("it may lag behind the latest official

release") are consistent so readers are not given conflicting expectations.

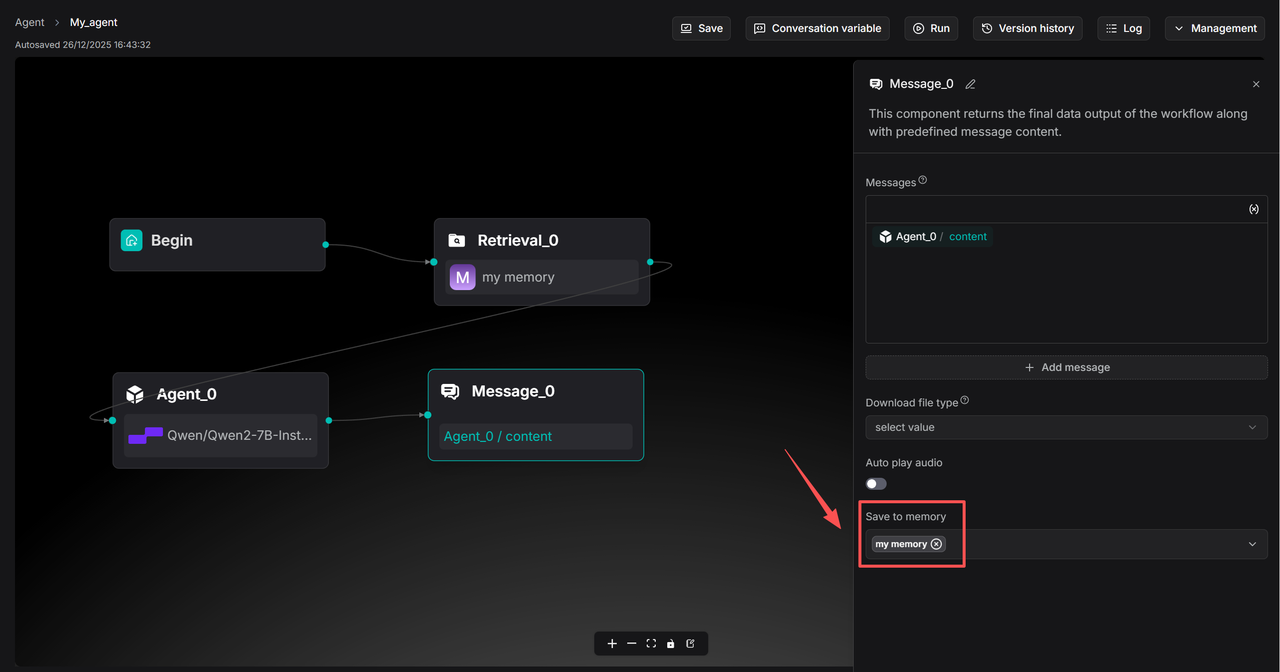

In `@docs/guides/agent/agent_component_reference/message.md`:

- Line 33: The image markdown lacks alt text which triggers MD045; update the

image tag on the line containing

to include a concise descriptive alt string (e.g., "Save to memory dialog

screenshot" or similar) so the tag becomes  to satisfy

linting and accessibility.

- Around line 35-41: Update the "User ID" section to explicitly state what the

User ID field stores (it persists the literal string provided, or an empty

string if left blank), explain that variable references are only resolved when

wrapped in braces (e.g., {sys.user_id}), and give examples showing both a fixed

ID (e.g., "alice123") and a dynamic binding ({sys.user_id}) so readers know how

to bind identities and that the toggle alone does not populate or scope memories

without a value.

In `@docs/guides/agent/agent_component_reference/retrieval.mdx`:

- Around line 84-86: The docs incorrectly present "User ID" as an alternative to

selecting Memory; update the text so it clarifies that selecting Memory (i.e.,

providing memory_ids) is still required and that user_id only filters messages

within those selected memories, and also fix the Empty response warning to state

that memory retrieval uses empty_response when no messages match the provided

memory_ids/user_id filter. Specifically, replace the alternative-bullets for

"Memory" and "User ID" with a single explanation referencing memory_ids and

user_id (e.g., "Memory: must select existing memories via memory_ids; user_id

may optionally be provided to filter messages within those memories"), and

adjust the paragraph mentioning empty_response to say it applies when no

messages match the selected memory_ids (after applying the optional user_id

filter).

In `@docs/guides/chat/set_chat_variables.md`:

- Around line 73-90: Update the fenced JSON code block's stale line-highlight

annotation: change the opening fence from ```json {9} to ```json {15} so the

highlight targets the "style":"hilarious" line; ensure the block still uses the

same curl payload and that the quoted key "style":"hilarious" is the highlighted

line in the docs file (reference the code block containing "style":"hilarious",

"messages", and other payload fields).

In `@docs/references/http_api_reference.md`:

- Around line 7970-7972: The docs claim the request-body field "kb_ids" is used

as a fallback, but the handler in api/apps/restful_apis/search_api.py (the

search endpoint logic around lines where

SearchService.get_search_kb_ids(search_id) is called) never reads req["kb_ids"];

update the code or docs: either remove "kb_ids" from the API docs (and any

duplicated mention at the other location) or implement the server-side fallback

by modifying the search handler to check req["kb_ids"] when

SearchService.get_search_kb_ids(search_id) returns empty/None (e.g., call

SearchService.get_search_kb_ids first, and if no IDs, use req["kb_ids"] before

proceeding to search). Ensure the change references

SearchService.get_search_kb_ids and the request parsing that currently ignores

req["kb_ids"].

In `@internal/cli/user_parser.go`:

- Around line 1306-1343: The parseShowBalance function has an extra

p.nextToken() that advances past the semicolon/EOF and mis-syncs the parser:

remove the redundant p.nextToken() call after parsing instanceName (keep the

single p.nextToken() immediately after parseQuotedString() that advances past

the quoted string), so the subsequent semicolon check inspects the correct

token; also update the inline comment that currently reads "// consume INSTANCE"

to reflect that this path was dispatched from TokenBalance (e.g. "// consume

BALANCE") and correct the error text returned by the provider-name check (change

"expected provider name after FROM PROVIDER" to something like "expected

provider name after FROM") so messages match the implemented grammar in

parseShowBalance, leaving NewCommand("show_instance_balance") and param

assignments unchanged.

In `@internal/entity/models/moonshot.go`:

- Around line 116-121: The parsing of Moonshot responses currently uses

unchecked type assertions on result["data"] and modelMap["id"], which can panic;

update the code around the models slice construction (the block that creates

models := make([]string, 0) and iterates over result["data"].([]interface{}))

and the similar code at the later block (lines ~173-174) to defensively validate

types and existence: check that result["data"] exists and is a []interface{}

(use an ok-style type assertion), for each item assert it's a

map[string]interface{} before accessing "id", verify modelMap["id"] is a string

before appending, and return a clear parse error (not panic) if any check fails

so the service can respond cleanly.

In `@sdk/python/test/test_frontend_api/common.py`:

- Around line 91-107: The params variable is a dict but the code calls

params.append(...) which raises AttributeError; change params to be a list of

tuples (e.g., params = []) so you can append query pairs for both branches,

rename the loop variable to avoid shadowing builtin (use doc_id_item or similar

instead of id), and for the single-id branch append ("id", doc_id) to the same

params list; keep the final requests.get(url=..., headers=authorization,

params=params) call as-is since requests accepts a list of tuples for query

parameters.

In `@test/testcases/test_http_api/common.py`:

- Around line 413-419: The helper currently sends POST to the chat completions

endpoint using the payload variable but does not set a default for streaming;

because streaming is now the default callers that omit "stream" may get SSE and

fail JSON parsing. Before calling requests.post (the call that uses url,

headers, auth, json=payload), ensure the helper sets

payload.setdefault("stream", False) (or otherwise injects "stream": False into

payload when not provided) so non-streaming JSON responses are returned by

default; make this change near where payload is created/modified (the payload

variable and just before the requests.post call).

In `@test/testcases/test_web_api/test_document_app/test_document_metadata.py`:

- Line 47: The test call to document_infos uses the deprecated request key

"doc_ids" which masks auth-only failure behavior; update the call in the

document_infos(invalid_auth, "kb_id", {"doc_ids": ["doc_id"]}) invocation to use

the migrated key {"ids": ["doc_id"]} so the request shape matches the new

dataset-scoped API and the test isolates the auth-negative path; ensure no other

parameters are changed and run the test to confirm it still asserts the auth

failure.

In `@test/testcases/test_web_api/test_search_app/test_search_routes_unit.py`:

- Around line 43-48: The _StubResponse class uses a plain dict for headers which

lacks add_header and breaks tests for search_api.completion; update

_StubResponse to use an object that supports add_header (e.g., mimic

werkzeug.wrappers.Response.headers or implement a small Headers stub with

add_header and a dict backstore) so calls like resp.headers.add_header(...)

succeed, and ensure async_ask mock remains compatible with completion tests;

locate the _StubResponse definition in the test file and replace headers = {}

with an instance of that Headers-like stub to let completion route tests assert

the four expected headers and iterate the SSE generator.

In `@web/src/services/next-chat-service.ts`:

- Around line 79-82: Client and server disagree on the HTTP verb for the thumbup

endpoint: web/src/services/next-chat-service.ts sets method: 'patch' for the

thumbup action while api/apps/restful_apis/chat_api.py still registers the

feedback route with methods=["PUT"], causing 405s; fix by making both sides

consistent — either change the client thumbup entry (thumbup in

next-chat-service.ts) to method: 'put' to match the existing server route, or

update the server route in chat_api.py (the feedback handler that currently has

methods=["PUT"]) to accept "PATCH" (or ["PUT","PATCH"] to be permissive); update

tests or callers accordingly and ensure the handler logic still works for PATCH

semantics if you choose to migrate the server.

---

Outside diff comments:

In `@internal/cli/user_command.go`:

- Around line 19-27: The code interpolates user-supplied provider and instance

names directly into a route (causing broken routing for values with "/", "?",

"#", or spaces); update the route construction in the user command handler

(e.g., where provider and instance variables are combined into the route/path in

the function handling the user command, such as handleUserCommand or the code

that sets the route variable) to escape path segments using net/url.PathEscape

(call PathEscape(provider) and PathEscape(instance) before appending or

fmt.Sprintf into the route). Ensure any other concatenations that build URL

paths from provider or instance use the escaped values so path traversal and

routing errors are prevented.

In `@sdk/python/ragflow_sdk/modules/dataset.py`:

- Around line 83-98: The params dict construction in DataSet.list_documents uses

params.append when ids is present which fails; change params to be a list of

(key,value) tuples (or convert the existing dict into a list of tuples) before

appending multiple ("ids", doc_id) entries, then pass that list to

self.get(f"/datasets/{self.id}/documents", params=params); update the handling

around the params variable and ensure the ids loop uses params.append(("ids",

doc_id)); add a unit test that calls list_documents with ids=[...] to cover this

path.

In `@sdk/python/test/test_frontend_api/test_chunk.py`:

- Around line 56-66: The polling loop in the test breaks because get_docs_info

now returns data as {"total": N, "docs": [...]}; update the loop that inspects

res from get_docs_info(get_auth, dataset_id, doc_ids=doc_id_list) so it iterates

the document list (use docs = res['data']['docs'] when res['data'] is a dict

with 'docs', otherwise fallback to res['data'] for backward compatibility), then

loop over docs and check doc_info['progress'] to count finished documents

(symbols to edit: get_docs_info call, res variable handling, the for doc_info in

... loop, finished_count and progress check).

In `@test/testcases/test_web_api/test_document_app/test_document_metadata.py`:

- Around line 177-180: The test test_infos_invalid_doc_id calls

document_infos(WebApiAuth, {"doc_ids": ["invalid_id"]}) but passes the params

dict as the dataset_id slot; change the call so the second argument is the

dataset_id and the params dict is passed as the third argument (i.e., call

document_infos with WebApiAuth, dataset_id, {"doc_ids": ["invalid_id"]}); update

the call site in test_infos_invalid_doc_id to use the proper dataset identifier

used elsewhere in tests (same value used in test_common where document_infos is

called) so the URL and ids filter are built correctly.

---

Nitpick comments:

In `@docs/develop/deepwiki.md`:

- Line 16: Replace the flagged phrasing in the DeepWiki doc: change "may lag

behind" to "may lag", remove or rephrase the repetitive "who want to" phrasing

around the sentence that repeats it, replace "making changes" with "changing" or

"modifying", and change "lagging behind" to "lagging" for consistency; update

the affected sentences to maintain natural flow and run the static checker to

confirm no remaining style warnings.

In `@internal/service/model_service.go`:

- Around line 478-493: Remove the stale commented-out JSON parsing and

result-construction block (the code that unmarshals instance.Extra into extra

and builds result with id, instanceName, providerID, status, region) that

appears after the successful return path; delete those commented lines so the

function no longer contains unreachable legacy code and the new balance flow in

model_service.go is easier to audit.

In `@test/testcases/test_web_api/test_search_app/test_search_routes_unit.py`:

- Around line 212-219: The stubbed async generator async_ask currently yields

nothing, hiding serialization and framing regressions; replace its body so it

yields at least one realistic payload matching the real contract (e.g., a dict

with keys like "answer": str, "reference": dict, "final": bool and any other

expected fields) before completing so the test exercises

json.dumps({"code":0,"message":"","data":ans}, ...) and the streamed payload →

terminal `data: True` ordering; update the stubbed function _async_ask in the

test to yield that realistic dict from

ModuleType("api.db.services.dialog_service").async_ask.

🪄 Autofix (Beta)

Fix all unresolved CodeRabbit comments on this PR:

- Push a commit to this branch (recommended)

- Create a new PR with the fixes

ℹ️ Review info

⚙️ Run configuration

Configuration used: Organization UI

Review profile: CHILL

Plan: Pro

Run ID: 6e6017c7-0791-48ca-91ea-11974aed995b

⛔ Files ignored due to path filters (1)

uv.lockis excluded by!**/*.lock

📒 Files selected for processing (40)

api/apps/document_app.pyapi/apps/restful_apis/chat_api.pyapi/apps/restful_apis/document_api.pyapi/apps/restful_apis/search_api.pydocs/develop/deepwiki.mddocs/guides/agent/agent_component_reference/message.mddocs/guides/agent/agent_component_reference/retrieval.mdxdocs/guides/chat/set_chat_variables.mddocs/references/http_api_reference.mddocs/release_notes.mdinternal/cli/client.gointernal/cli/lexer.gointernal/cli/types.gointernal/cli/user_command.gointernal/cli/user_parser.gointernal/entity/models/deepseek.gointernal/entity/models/dummy.gointernal/entity/models/factory.gointernal/entity/models/moonshot.gointernal/entity/models/types.gointernal/entity/models/zhipu-ai.gointernal/handler/providers.gointernal/router/router.gointernal/service/model_service.gosdk/python/ragflow_sdk/modules/dataset.pysdk/python/ragflow_sdk/modules/session.pysdk/python/test/test_frontend_api/common.pysdk/python/test/test_frontend_api/test_chunk.pytest/testcases/test_http_api/common.pytest/testcases/test_http_api/test_session_management/test_chat_completions.pytest/testcases/test_web_api/test_common.pytest/testcases/test_web_api/test_document_app/test_document_metadata.pytest/testcases/test_web_api/test_search_app/test_search_routes_unit.pyweb/src/hooks/logic-hooks.tsweb/src/pages/next-chats/hooks/use-send-chat-message.tsweb/src/pages/next-chats/hooks/use-send-single-message.tsweb/src/pages/next-search/hooks.tsweb/src/services/knowledge-service.tsweb/src/services/next-chat-service.tsweb/src/utils/api.ts

💤 Files with no reviewable changes (1)

- api/apps/document_app.py

✅ Files skipped from review due to trivial changes (2)

- internal/cli/types.go

- docs/release_notes.md

🚧 Files skipped from review as they are similar to previous changes (2)

- test/testcases/test_web_api/test_common.py

- web/src/utils/api.ts

| def _create_session_for_completion(chat_id, dialog, user_id): | ||

| conv = { | ||

| "id": get_uuid(), | ||

| "dialog_id": chat_id, | ||

| "name": "New session", | ||

| "message": [{"role": "assistant", "content": dialog.prompt_config.get("prologue", "")}], | ||

| "user_id": user_id, | ||

| "reference": [], | ||

| } | ||

| ConversationService.save(**conv) | ||

| ok, conv_obj = ConversationService.get_by_id(conv["id"]) | ||

| if not ok: | ||

| raise LookupError("Fail to create a session!") | ||

| return conv_obj |

There was a problem hiding this comment.

Silent failure if ConversationService.save returns falsy.

_create_session_for_completion calls ConversationService.save(**conv) but never inspects the return value — if save fails but get_by_id still resolves some stale row (or partially), the caller only notices via the subsequent lookup. Mirror create_session at Line 657-660:

🛠️ Suggested

- ConversationService.save(**conv)

- ok, conv_obj = ConversationService.get_by_id(conv["id"])

- if not ok:

- raise LookupError("Fail to create a session!")

+ if not ConversationService.save(**conv):

+ raise LookupError("Fail to save a session!")

+ ok, conv_obj = ConversationService.get_by_id(conv["id"])

+ if not ok:

+ raise LookupError("Fail to create a session!")

+ logging.info("Created completion session %s for chat %s", conv["id"], chat_id)

return conv_objAlso note LookupError raised here propagates to the outer except Exception at Line 1119 and becomes a generic 500 — acceptable, but consider a dedicated error code/message since the caller reached this point with a valid, owned chat.

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@api/apps/restful_apis/chat_api.py` around lines 154 - 167, The helper

_create_session_for_completion currently ignores the return value of

ConversationService.save and can silently continue on failure; update

_create_session_for_completion to capture and check the result of

ConversationService.save(**conv) and if it indicates failure, raise a clear

error (or return an error tuple) instead of proceeding to get_by_id; mirror the

behavior used in create_session (use the same success/failure check and error

handling pattern) and use a more specific exception/message (e.g.,

SessionCreationError or a descriptive LookupError message) so callers can

distinguish a save failure from a missing lookup.

| try: | ||

| e, conv = ConversationService.get_by_id(session_id) | ||

| if not e: | ||

| return get_data_error_result(message="Session not found!") | ||

| if conv.dialog_id != chat_id: | ||

| return get_data_error_result(message="Session does not belong to this chat!") | ||

| conv.message = deepcopy(req["messages"]) | ||

| e, dia = DialogService.get_by_id(chat_id) | ||

| if not e: | ||

| return get_data_error_result(message="Chat not found!") | ||

| conv = None | ||

| if session_id and not chat_id: | ||

| return get_data_error_result(message="`chat_id` is required when `session_id` is provided.") | ||

|

|

||

| if chat_id: | ||

| if not _ensure_owned_chat(chat_id): | ||

| return get_json_result( | ||

| data=False, | ||

| message="No authorization.", | ||

| code=RetCode.AUTHENTICATION_ERROR, | ||

| ) | ||

| e, dia = DialogService.get_by_id(chat_id) | ||

| if not e: | ||

| return get_data_error_result(message="Chat not found!") | ||

| if session_id: | ||

| e, conv = ConversationService.get_by_id(session_id) | ||

| if not e: | ||

| return get_data_error_result(message="Session not found!") | ||

| if conv.dialog_id != chat_id: | ||

| return get_data_error_result(message="Session does not belong to this chat!") | ||

| else: | ||

| conv = _create_session_for_completion(chat_id, dia, req.get("user_id", current_user.id)) | ||

| session_id = conv.id | ||

| conv.message = deepcopy(req["messages"]) | ||

| else: | ||

| dia = _build_default_completion_dialog() | ||

| dia.llm_setting = chat_model_config | ||

|

|

There was a problem hiding this comment.

No LLM fallback when chat_id is absent — async_chat will be invoked with llm_id="".

In the new "direct completions" branch (chat_id missing), dia = _build_default_completion_dialog() sets llm_id="" and tenant_llm_id=None. If the caller also omits llm_id from the body, chat_model_id at Line 1032 is "", the if chat_model_id: block at Line 1077 is skipped, and async_chat(dia, ...) will run with an empty model id. That will either 500 deep inside LLMBundle or silently pick the wrong model.

Please either require llm_id in the body when chat_id is not provided, or fall back to the tenant default:

🛠️ Suggested fallback

else:

dia = _build_default_completion_dialog()

dia.llm_setting = chat_model_config

+ if not chat_model_id:

+ default_chat = get_tenant_default_model_by_type(current_user.id, LLMType.CHAT)

+ dia.llm_id = getattr(default_chat, "llm_name", "") or default_chat.get("llm_name", "")Per coding guidelines ("Add logging for new flows"), please also add a logging.info(...) around this new direct-completion path and inside _create_session_for_completion so the two newly introduced flows are observable.

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@api/apps/restful_apis/chat_api.py` around lines 1040 - 1068, When chat_id is

absent the dialog created by _build_default_completion_dialog has llm_id="" and

chat_model_id can be empty, causing async_chat(dia, ...) to be called with an

invalid model; change the branch where chat_id is falsy to resolve a valid LLM

id: fetch tenant default LLM (or require req["llm_id"] if preferred) and set

dia.llm_setting/llm_id accordingly before calling async_chat, and add

logging.info calls to (1) the "direct completion" path where dia is created and

the chosen llm_id/model is recorded, and (2) inside

_create_session_for_completion to log when a session is auto-created and which

model/tenant_llm_id was used; update references in this branch to use

chat_model_id or the tenant default consistently so async_chat always receives a

non-empty model id.

| doc_ids = q.getlist("ids") | ||

| if doc_id and len(doc_ids) > 0: | ||

| return RetCode.DATA_ERROR, f"Should not provide both 'id':{doc_id} and 'ids'{doc_ids}" | ||

| if len(doc_ids) > 0: | ||

| doc_ids_filter = doc_ids |

There was a problem hiding this comment.

Tuple-arity mismatch — ids + id branch crashes the endpoint.

_get_docs_with_request is called as err_code, err_msg, docs, total = _get_docs_with_request(...) (line 436) and every other error return in this function yields a 4-tuple. The new branch on line 533 only returns a 2-tuple:

return RetCode.DATA_ERROR, f"Should not provide both 'id':{doc_id} and 'ids'{doc_ids}"As soon as a client passes both id and ids, unpacking at the call site raises ValueError: not enough values to unpack (expected 4, got 2) and the request fails with a 500 instead of the intended 4xx. Also, this check should happen before the doc_id block at line 524 so the "own the document" DB query isn't run when the request is already invalid.

Secondary concern: on line 535, when metadata filtering already produced a non-empty doc_ids_filter (from _parse_doc_id_filter_with_metadata), unconditionally overwriting it with doc_ids drops the metadata constraint rather than intersecting. If combining metadata + ids is supposed to AND, use set intersection; otherwise document the override.

🛠️ Proposed fix

- doc_name = q.get("name")

- doc_id = q.get("id")

- if doc_id:

- if not DocumentService.query(id=doc_id, kb_id=dataset_id):

- return RetCode.DATA_ERROR, f"You don't own the document {doc_id}.", [], 0

- doc_ids_filter = [doc_id] # id provided, ignore other filters

- if doc_name and not DocumentService.query(name=doc_name, kb_id=dataset_id):

- return RetCode.DATA_ERROR, f"You don't own the document {doc_name}.", [], 0

-

- doc_ids = q.getlist("ids")

- if doc_id and len(doc_ids) > 0:

- return RetCode.DATA_ERROR, f"Should not provide both 'id':{doc_id} and 'ids'{doc_ids}"

- if len(doc_ids) > 0:

- doc_ids_filter = doc_ids

+ doc_name = q.get("name")

+ doc_id = q.get("id")

+ doc_ids = q.getlist("ids")

+ if doc_id and len(doc_ids) > 0:

+ return RetCode.DATA_ERROR, f"Should not provide both 'id':{doc_id} and 'ids':{doc_ids}", [], 0

+ if doc_id:

+ if not DocumentService.query(id=doc_id, kb_id=dataset_id):

+ return RetCode.DATA_ERROR, f"You don't own the document {doc_id}.", [], 0

+ doc_ids_filter = [doc_id] # id provided, ignore other filters

+ if doc_name and not DocumentService.query(name=doc_name, kb_id=dataset_id):

+ return RetCode.DATA_ERROR, f"You don't own the document {doc_name}.", [], 0

+ if len(doc_ids) > 0:

+ doc_ids_filter = doc_ids # NOTE: intentionally overrides metadata-derived filter🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@api/apps/restful_apis/document_api.py` around lines 531 - 535, In

_get_docs_with_request: move the mutual-exclusive check for doc_id and doc_ids

so it runs before the doc_id ownership DB query (i.e., place the doc_id/doc_ids

conflict check before the block using doc_id), and make the error return match

the function's 4-tuple contract (return RetCode.DATA_ERROR, "<message>", [], 0).

Also, when doc_ids is provided and doc_ids_filter already exists from

_parse_doc_id_filter_with_metadata, intersect doc_ids_filter with doc_ids (set

intersection) instead of unconditionally assigning doc_ids to preserve metadata

filtering.

| @manager.route("/searches/<search_id>/completion", methods=["POST"]) # noqa: F821 | ||

| @login_required | ||

| @validate_request("question") | ||

| async def completion(search_id): | ||

| if not SearchService.accessible4deletion(search_id, current_user.id): | ||

| return get_json_result( | ||

| data=False, | ||

| message="No authorization.", | ||

| code=RetCode.AUTHENTICATION_ERROR, | ||

| ) | ||

|

|

||

| req = await get_request_json() | ||

| uid = current_user.id | ||

| search_app = SearchService.get_detail(search_id) | ||

| if not search_app: | ||

| return get_data_error_result(message=f"Cannot find search {search_id}") | ||

|

|

||

| search_config = search_app.get("search_config", {}) | ||

| kb_ids = search_config.get("kb_ids") or req.get("kb_ids") or [] | ||

| if not kb_ids: | ||

| return get_data_error_result(message="`kb_ids` is required.") | ||

|

|

||

| async def stream(): | ||

| nonlocal req, uid, kb_ids, search_config | ||

| try: | ||

| async for ans in async_ask(req["question"], kb_ids, uid, search_config=search_config): | ||

| yield "data:" + json.dumps({"code": 0, "message": "", "data": ans}, ensure_ascii=False) + "\n\n" | ||

| except Exception as ex: | ||

| yield "data:" + json.dumps( | ||

| {"code": 500, "message": str(ex), "data": {"answer": "**ERROR**: " + str(ex), "reference": []}}, | ||

| ensure_ascii=False, | ||

| ) + "\n\n" | ||

| yield "data:" + json.dumps({"code": 0, "message": "", "data": True}, ensure_ascii=False) + "\n\n" | ||

|

|

||

| resp = Response(stream(), mimetype="text/event-stream") | ||

| resp.headers.add_header("Cache-control", "no-cache") | ||

| resp.headers.add_header("Connection", "keep-alive") | ||

| resp.headers.add_header("X-Accel-Buffering", "no") | ||

| resp.headers.add_header("Content-Type", "text/event-stream; charset=utf-8") | ||

| return resp |

There was a problem hiding this comment.

🧩 Analysis chain

🏁 Script executed:

#!/bin/bash

# Confirm tenant_id pattern used by async_ask callers elsewhere.

rg -nP -C3 '\basync_ask\s*\(' --type=pyRepository: infiniflow/ragflow

Length of output: 6645

🏁 Script executed:

# Search for mindmap function in chat_api.py around line 973

rg -nP 'def mindmap' api/apps/restful_apis/chat_api.pyRepository: infiniflow/ragflow

Length of output: 86

🏁 Script executed:

# Also check if there's any async_ask call with search_app.get("tenant_id") pattern

rg -nP 'search_app\.get.*tenant_id' --type=pyRepository: infiniflow/ragflow

Length of output: 218

🏁 Script executed:

# Verify logging imports and usage in search_api.py

rg -nP 'logging\.' api/apps/restful_apis/search_api.py | head -20Repository: infiniflow/ragflow

Length of output: 44

🏁 Script executed:

# Get the full completion function from search_api.py to check for logging

sed -n '176,215p' api/apps/restful_apis/search_api.pyRepository: infiniflow/ragflow

Length of output: 1857

🏁 Script executed:

# Check imports at the top of search_api.py

head -30 api/apps/restful_apis/search_api.pyRepository: infiniflow/ragflow

Length of output: 1306

🏁 Script executed:

# Check the mindmap function to see full context

sed -n '964,1000p' api/apps/restful_apis/chat_api.pyRepository: infiniflow/ragflow

Length of output: 1499

Align tenant_id resolution with mindmap handler and add server-side error logging per coding guidelines.

-

Tenant ID inconsistency: The

completionendpoint passesuid(current_user.id) toasync_ask(), while the peermindmaphandler (chat_api.py:973) usessearch_app.get("tenant_id", current_user.id)for model/embedding resolution. If searches are ever shared across tenants, these handlers will diverge. Usesearch_app.get("tenant_id", current_user.id)here for consistency. -

accessible4deletionis being used as a generic "can access" gate for a read path; the naming is misleading (not introduced by this PR, but worth tracking). -

Per coding guidelines ("Add logging for new flows"), the

stream()function's exception handler yields errors only to the client; addlogging.exception(ex)to theexceptbranch so server-side errors aren't lost.

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@api/apps/restful_apis/search_api.py` around lines 176 - 215, The completion

handler currently passes current_user.id (uid) into async_ask and only yields

exceptions to the client; update it to derive tenant_id from the found

SearchService.get_detail(search_id) (use search_app.get("tenant_id",

current_user.id)) and pass that tenant_id to async_ask instead of uid to match

the mindmap handler, and inside the async stream() except block add a

server-side log call (logging.exception(ex)) before yielding the error to the

client; leave the existing accessible4deletion check in place but do not change

its name here.

|

|

||

| # Explore RAGFlow on DeepWiki | ||

|

|

||

| An AI-generated, always-up-to-date knowledge base for understanding RAGFlow's codebase — designed for developers doing secondary development or deep-diving into RAGFlow's internals. |

There was a problem hiding this comment.

Resolve the "always-up-to-date" claim that contradicts the caution note.

Line 11 describes the knowledge base as "always-up-to-date," but line 16 warns that "it may lag behind the latest official release." These statements contradict each other and may confuse readers about the currency of the information.

📝 Proposed fix to align the introduction with the caution note

-An AI-generated, always-up-to-date knowledge base for understanding RAGFlow's codebase — designed for developers doing secondary development or deep-diving into RAGFlow's internals.

+An AI-generated knowledge base for understanding RAGFlow's codebase — designed for developers doing secondary development or deep-diving into RAGFlow's internals.📝 Committable suggestion

‼️ IMPORTANT

Carefully review the code before committing. Ensure that it accurately replaces the highlighted code, contains no missing lines, and has no issues with indentation. Thoroughly test & benchmark the code to ensure it meets the requirements.

| An AI-generated, always-up-to-date knowledge base for understanding RAGFlow's codebase — designed for developers doing secondary development or deep-diving into RAGFlow's internals. | |

| An AI-generated knowledge base for understanding RAGFlow's codebase — designed for developers doing secondary development or deep-diving into RAGFlow's internals. |

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@docs/develop/deepwiki.md` at line 11, The intro sentence currently calls the

knowledge base "always-up-to-date" which contradicts the caution that "it may

lag behind the latest official release"; update the opening line (the phrase

"always-up-to-date") to a reconciled, accurate wording such as "AI-generated,

regularly-updated knowledge base" or "AI-generated, frequently-updated knowledge

base (may lag behind the latest release)" and ensure the phrasing in the initial

description and the caution sentence ("it may lag behind the latest official

release") are consistent so readers are not given conflicting expectations.

| # Validate that id and ids are not used together | ||

| if doc_id and doc_ids: | ||

| raise ValueError("Cannot use both 'id' and 'ids' parameters at the same time.") | ||

|

|

||

| authorization = {"Authorization": auth} | ||

| json_req = {"doc_ids": doc_ids} | ||

| url = f"{HOST_ADDRESS}/v1/document/infos" | ||

| res = requests.post(url=url, headers=authorization, json=json_req) | ||

| params = {} | ||

| if doc_ids: | ||

| # Multiple IDs | ||

| for id in doc_ids: | ||

| params.append(("ids", id)) | ||

| elif doc_id: | ||

| # Single ID | ||

| params["id"] = doc_id | ||

|

|

||

| # Use /api/v1 prefix for dataset API | ||

| url = f"{HOST_ADDRESS}/api/v1/datasets/{dataset_id}/documents" | ||

| res = requests.get(url=url, headers=authorization, params=params) |

There was a problem hiding this comment.

params.append(...) on a dict will raise AttributeError at runtime.

params is initialized as {} on line 96 but line 100 calls params.append(("ids", id)), which is only valid on lists. Every call to get_docs_info(..., doc_ids=[...]) (including the two new call sites in test_chunk.py) will crash with AttributeError: 'dict' object has no attribute 'append'. The doc_id branch is also inconsistent — it mutates the same params as a dict. Use a list of tuples for both branches; requests accepts that for query string building.

Additionally, id shadows the Python builtin — prefer doc_id as the loop variable.

🛠️ Proposed fix

authorization = {"Authorization": auth}

- params = {}

- if doc_ids:

- # Multiple IDs

- for id in doc_ids:

- params.append(("ids", id))

- elif doc_id:

- # Single ID

- params["id"] = doc_id

+ params: list[tuple[str, str]] = []

+ if doc_ids:

+ # Multiple IDs

+ for did in doc_ids:

+ params.append(("ids", did))

+ elif doc_id:

+ # Single ID

+ params.append(("id", doc_id))🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@sdk/python/test/test_frontend_api/common.py` around lines 91 - 107, The

params variable is a dict but the code calls params.append(...) which raises

AttributeError; change params to be a list of tuples (e.g., params = []) so you

can append query pairs for both branches, rename the loop variable to avoid

shadowing builtin (use doc_id_item or similar instead of id), and for the