A shell script for Linux that analyzes the file size distribution of a directory and recommends ZFS recordsize settings for different workload types.

./optimal_zfs_recordsize.sh /path/to/dataset- Pipes

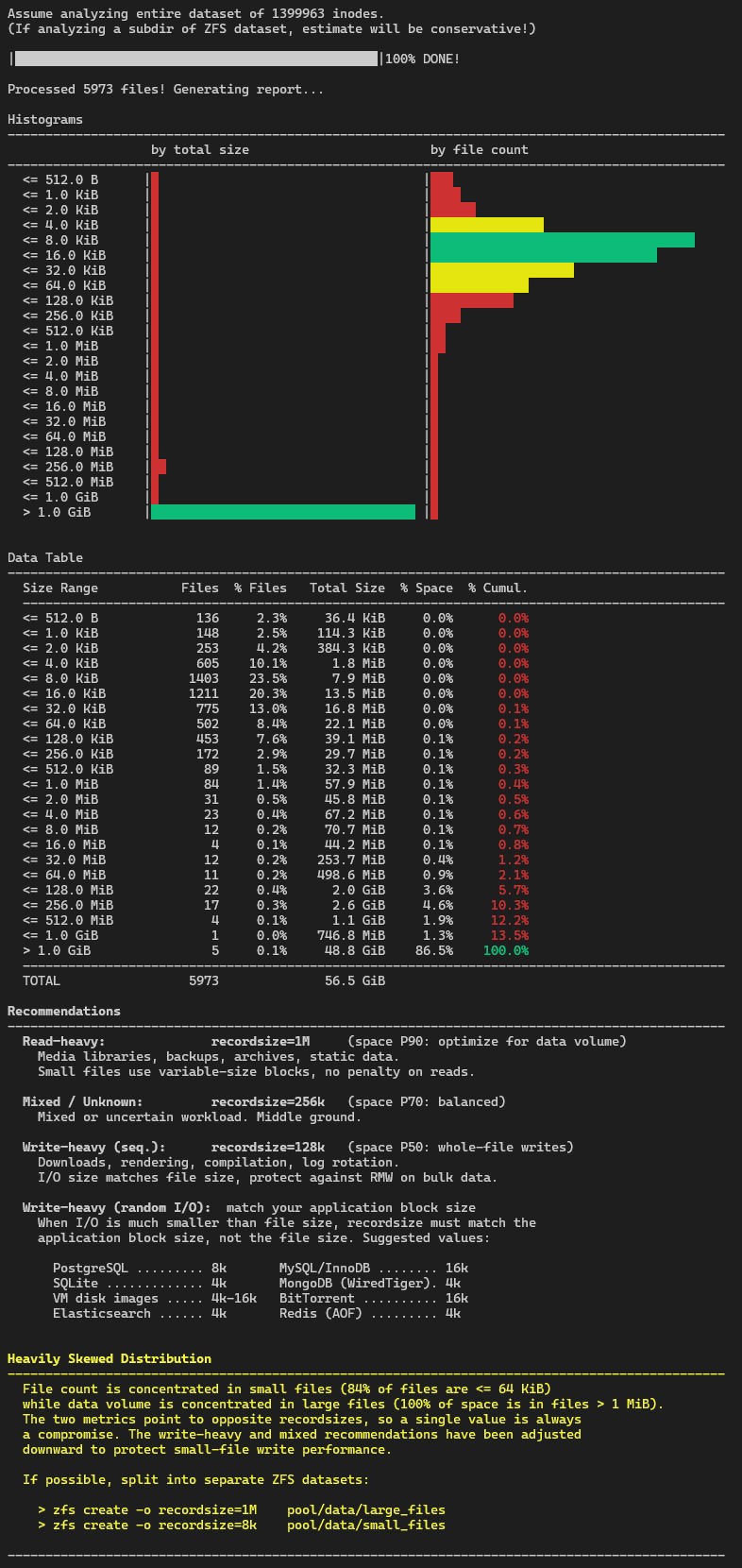

finddirectly intogawk, builds a space-weighted cumulative distribution function (CDF): - finds the bins where the CDF exceeds 50%, 70%, and 90%

- maps the write-heavy sequential case to the bin at the 50th percentile (P50), the mixed case to P70, and the read-heavy case to P90

- if 60% of the files are smaller than 64KiB AND 80% of the total space is in files larger than 1MiB, it treats the CDF as heavily skewed, forces the write-heavy sequential suggestion to 128K and the mixed suggestion to 256K as a compromise, and shows an alert

- if all 3 cases resolve to the same suggestion, it prints just one

- in any case, the write-heavy random I/O case always gets the same suggestion: match the application block size, not the file size. (For now this case outputs a static suggestion; maybe in the future I will add file type detection for databases, we'll see.)
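The steps above can be sketched roughly as follows. This is a minimal illustration, not the script's actual code: the function name `suggest_recordsize`, the power-of-two bins from 4K to 1M, the use of `>=` for the skew thresholds, and the output wording are all assumptions. It reads one file size in bytes per line on stdin and sticks to POSIX awk features, so it should run under `gawk` as well as other awk implementations.

```shell
# Sketch only: names, bin edges and output format are assumptions.
suggest_recordsize() {
  awk '
    function label(b) {
      if (b >= 1048576) return (b / 1048576) "M"
      return (b / 1024) "K"
    }
    BEGIN {
      # Assumed bins: upper edges at powers of two from 4K to 1M.
      n = 0
      for (b = 4096; b <= 1048576; b *= 2) edge[++n] = b
    }
    /^[0-9]+$/ {
      size = $1 + 0
      files++; bytes += size
      if (size < 65536)   small_files++       # files smaller than 64KiB
      if (size > 1048576) big_bytes += size   # space held by files over 1MiB
      # Put each file in the first bin whose upper edge is >= its size;
      # anything larger than 1M lands in the last bin.
      i = 1
      while (i < n && size > edge[i]) i++
      bin_bytes[i] += size                    # weight by space, not by count
    }
    END {
      if (files == 0 || bytes == 0) { print "no data"; exit 1 }
      # Walk the space-weighted CDF and note where it first exceeds each target.
      cum = 0
      for (i = 1; i <= n; i++) {
        cum += bin_bytes[i]
        cdf = cum / bytes
        if (!p50 && cdf > 0.50) p50 = edge[i]
        if (!p70 && cdf > 0.70) p70 = edge[i]
        if (!p90 && cdf > 0.90) p90 = edge[i]
      }
      # Skew rule: many small files, but most space in large files.
      skewed = (small_files / files >= 0.60 && big_bytes / bytes >= 0.80)
      if (skewed) {
        print "alert: skewed distribution, forcing 128K / 256K compromise"
        p50 = 131072; p70 = 262144
      }
      if (p50 == p70 && p70 == p90) {
        print "all workloads: " label(p50)
      } else {
        print "write-heavy sequential: " label(p50)
        print "mixed: " label(p70)
        print "read-heavy: " label(p90)
      }
      print "write-heavy random: match the application block size"
    }'
}
```

With GNU `find`, the sizes could be fed in with something like `find /path/to/dataset -type f -printf '%s\n' | suggest_recordsize`.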

Requirements:

- Bash

- GNU Awk (`gawk`)

- GNU `find` with `-printf` support
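A quick way to check for these tools before running the script might look like the sketch below. The helpers `have_cmd` and `check_requirements` are hypothetical and not part of the script; the `-printf` probe relies on the fact that GNU `find` accepts that action while other implementations typically reject it.

```shell
# Hypothetical preflight helpers, not taken from the script itself.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

check_requirements() {
  local missing=0
  for cmd in bash gawk find; do
    have_cmd "$cmd" || { echo "missing: $cmd" >&2; missing=1; }
  done
  # Probe -printf support: prints nothing and succeeds on GNU find.
  find . -maxdepth 0 -printf '' 2>/dev/null ||
    { echo "find lacks -printf support" >&2; missing=1; }
  return "$missing"
}
```

Calling `check_requirements` returns 0 when everything is available and 1 otherwise, with the missing pieces listed on stderr.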